There are basically two different ways to build collections for distant reading. You can build up collections of specific genres, selecting volumes that you know belong to them. Or you can take an entire digital library as your base collection, and subdivide it by genre.

Most people do it the first way, and having just spent two years learning to do it the second way, I’d like to admit that they’re right. There’s a lot of overhead involved in mining a library. The problem becomes too big for your desktop; you have to schedule batch jobs; you have to learn to interpret MARC records. All this may be necessary eventually, but it’s not the ideal place to start.

But some of the problems I’ve encountered have been interesting. In particular, the problem of “dividing a library by genre” has made me realize that literary studies is constituted by exclusions that are a bit larger and more arbitrary than I used to think.

First of all, why is dividing by genre even a problem? Well, most machine-readable catalog records don’t say much about genre, and even if they did, a single volume usually contains multiple genres anyway. (Think introductions, indexes, collected poems and plays, etc.) With support from the ACLS and NEH, I’ve spent the last year wrestling with that problem, and in a couple of weeks I’m going to share an imperfect page-level map of genre for English-language books in HathiTrust 1700-1923.

But the bigger thing I want to report is that the ambiguity of genre may run deeper than most scholars who aren’t librarians currently imagine. To be sure, we know that subgenres like “detective fiction” are social institutions rather than natural forms. And in a vague way we also accept that broader categories like “fiction” and “poetry” are social constructs with blurry edges. We can all point to a few anomalies: prose poems, eighteenth-century journalistic fictions like *The Spectator*, and so on.

But somehow, in spite of knowing this for twenty years, I never grasped the full scale of the problem. For instance, I knew the boundary between fiction and nonfiction was blurry in the eighteenth century, but I thought it had stabilized over time. By the time you got to the Victorians, surely, you could draw a circle around “fiction.” Exceptions would just prove the rule.

Selecting volumes one by one for genre-specific collections didn’t shake my confidence. But if you start with a whole library and try to winnow it down, you’re forced to consider a lot of things you would otherwise never look at. I’ve become convinced that the subset of genre-typical cases (should we call them cis-genred volumes?) is nowhere near as paradigmatic as literary scholars like to imagine. A substantial proportion of the books in a library don’t fit those models.

Consider the case of *Shinkah, the Osage Indian*, published in 1916 by S. M. Barrett. The preface to this volume informs us that it’s intended as a contribution to “the sociology of the Osage Indians.” But it’s set a hundred years in the past, and the central character, Shinkah, is entirely fictional (his name just means “child”). On the other hand, the book is illustrated with photographs of real contemporary people, who stand in for the characters in an ethnotypical way.

After wading through 872,000 volumes, I’m sorry to report that odd cases of this kind are more typical of nineteenth- and early-twentieth-century fiction than my graduate-school training had led me to believe. There’s a smooth continuum, for instance, between *Shinkah* and *Old Court Life in France* (1873), by Frances Elliot. This book has a bibliography and a historiographical preface, but otherwise reads like a historical novel, complete with invented dialogue. I’m not sure how to distinguish it from other historical novels that use real historical personages as characters.

Literary critics know there’s a problem with historical fiction. We also know about the blurry boundary between fiction, journalism, and travel writing represented by the genre of the “sketch.” And anyone who remembers James Frey being kicked out of Oprah Winfrey’s definition of nonfiction knows that autobiographies can be problematic. And we know that didactic fiction blurs into philosophical dialogue. And anyone who studies children’s literature knows that the boundary between fiction and nonfiction gets especially blurry there. And probably some of us know about ethnographic novels like *Shinkah*. But I’m not sure many of us (except for librarians) have added it all up. When you’re sorting through an entire library, you’re forced to see the scale of it: in the period 1700-1923, maybe 10% of the volumes that could be cataloged as fiction present puzzling boundary cases.

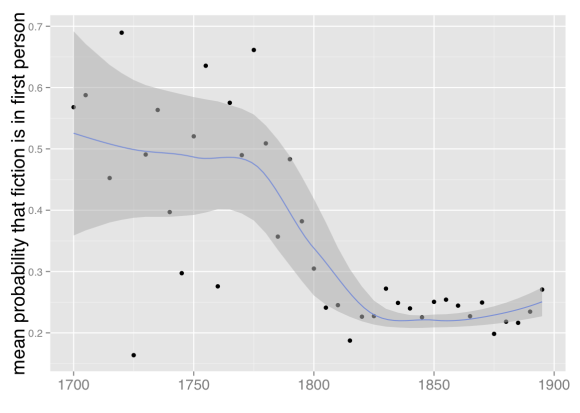

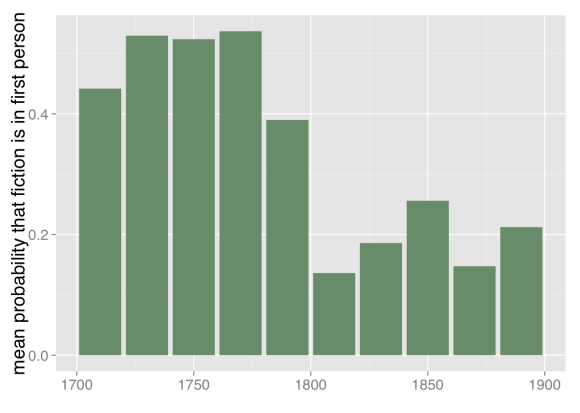

You run into a lot of these works even if you browse or select titles at random; that’s how I met *Shinkah*. But I’ve also been training probabilistic models of genre that report, among other things, how certain or uncertain they are about each page. These models are good at identifying clear cases of our received categories: they agreed with my research assistants almost exactly as often as the research assistants agreed with each other (93-94% of the time, on broad categories like fiction/nonfiction). But you can also ask a model to sift through several thousand volumes looking for hard cases. When I did that, I was taken aback to discover that about half the volumes it had most trouble with were things I also found impossible to classify. The model was most uncertain, for instance, about *The Terrific Register* (1825), an almanac that mixes historical anecdote, urban legend, and outright fiction randomly from page to page. The second-most puzzling book was *Madagascar, or Robert Drury’s Journal* (1729), which offers itself as the travel journal of a real person and was long accepted as one, although scholars have more recently argued that it was written by Defoe.
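The post doesn’t say exactly how the model’s “trouble” with a volume was measured, so what follows is only a minimal sketch under stated assumptions: each page gets a probability of being fiction, a volume’s probability is the mean over its pages, and difficulty is the binary entropy of that mean (highest at 0.5). The function names and the three volumes are hypothetical, and the numbers are invented for illustration.

```python
import math


def binary_entropy(p: float) -> float:
    """Uncertainty, in bits, of a yes/no prediction made with probability p."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -(p * math.log2(p) + (1 - p) * math.log2(1 - p))


def rank_hard_cases(page_probs: dict[str, list[float]], top_n: int = 10) -> list[tuple[str, float]]:
    """Rank volumes by the entropy of their mean page-level P(fiction).

    Hypothetical helper: this volume score is an assumption, not the post's method.
    """
    scores = {}
    for volume, probs in page_probs.items():
        if not probs:
            continue  # skip volumes with no scored pages
        mean_p = sum(probs) / len(probs)
        scores[volume] = binary_entropy(mean_p)
    # Most uncertain volumes first.
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)[:top_n]


# Invented page-level probabilities for three hypothetical volumes.
pages = {
    "clear_novel": [0.97, 0.99, 0.95, 0.98],
    "clear_treatise": [0.02, 0.01, 0.05, 0.02],
    "mixed_almanac": [0.9, 0.1, 0.95, 0.05],  # confident pages that alternate genre
}

for volume, score in rank_hard_cases(pages, top_n=3):
    print(f"{volume}: {score:.2f} bits")
```

Taking the entropy of the averaged probability, rather than averaging page-level entropies, flags two kinds of hard case at once: volumes whose individual pages are ambiguous, and volumes that, like an almanac, swing confidently between fiction and nonfiction from page to page.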

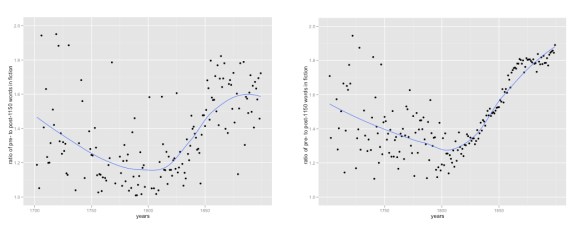

Of course, a statistical model of fiction doesn’t care whether things “really happened”; it pays attention mostly to word frequency. Past-tense verbs of speech, personal names, and “the,” for instance, are disproportionately common in fiction. “Is” and “also” and “mr” (and a few hundred other words) are common in nonfiction. Human readers probably think about genre in a more abstract way. But it’s not particularly miraculous that a model using word frequencies should be confused by the same examples we find confusing. The model was trained, after all, on examples tagged by human beings; the whole point of doing that was to reproduce as much as possible the contours of the boundary that separates genres for us. The only thing that’s surprising is that trawling the model through a library turns up more books right in the middle of the boundary region than our habits of literary attention would have suggested.
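To make the dependence on word frequency concrete, here is a toy classifier. The post doesn’t specify the model family, so this sketch swaps in multinomial naive Bayes with add-one smoothing, chosen only because it is short and its reliance on word counts is easy to see; the class name, tokenizer, and training pages are all hypothetical. Like the models described above, it learns nothing about whether events “really happened,” only which words tend to appear on pages that humans have tagged as fiction or nonfiction.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase and keep runs of letters (a deliberately crude tokenizer)."""
    return re.findall(r"[a-z]+", text.lower())


class NaiveBayesGenre:
    """Hypothetical toy model: multinomial naive Bayes with add-one smoothing."""

    def fit(self, pages: list[str], labels: list[str]) -> "NaiveBayesGenre":
        self.classes = sorted(set(labels))
        self.doc_counts = Counter(labels)
        self.word_counts = {c: Counter() for c in self.classes}
        for text, label in zip(pages, labels):
            self.word_counts[label].update(tokenize(text))
        self.vocab = set().union(*self.word_counts.values())
        return self

    def log_scores(self, text: str) -> dict[str, float]:
        """Unnormalized log P(class) plus the sum of log P(word | class)."""
        total_docs = sum(self.doc_counts.values())
        scores = {}
        for c in self.classes:
            counts = self.word_counts[c]
            denom = sum(counts.values()) + len(self.vocab)
            score = math.log(self.doc_counts[c] / total_docs)
            for word in tokenize(text):
                if word in self.vocab:  # words never seen in training are ignored
                    score += math.log((counts[word] + 1) / denom)
            scores[c] = score
        return scores

    def prob(self, text: str, target: str) -> float:
        """Posterior P(target | text), normalized stably via log-sum-exp."""
        scores = self.log_scores(text)
        top = max(scores.values())
        z = sum(math.exp(s - top) for s in scores.values())
        return math.exp(scores[target] - top) / z


# Invented training pages, tagged the way a human annotator might tag them.
train_pages = [
    "the captain said softly and the girl replied that she would wait",
    "mary cried out and the old man answered her at the door",
    "the climate of the region is temperate and the soil is also fertile",
    "mr smith is also the author of a report on the harbour",
]
train_labels = ["fiction", "fiction", "nonfiction", "nonfiction"]

model = NaiveBayesGenre().fit(train_pages, train_labels)
print(model.prob("the old captain said nothing and the girl cried", "fiction"))
print(model.prob("the harbour is also mentioned in the report", "fiction"))
```

A realistic version would be trained on thousands of human-tagged pages, and a regularized discriminative model might well be preferred; the point here is only that, once the training tags say so, words like “said” and “cried” push a page toward fiction while “is,” “also,” and “mr” push it the other way.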

A lot of discussions of distant reading have imagined it as a move from canonical to popular or obscure examples of a (known) genre. But reconsidering our definitions of the genres we’re looking for may be just as important. We may come to recognize that “the novel” and “the lyric poem” have always been islands floating in a sea of other texts that were widely read but never genre-typical enough to be replicated on English syllabi.

In the long run, this may require us to balance two kinds of inclusiveness. We already know that digital libraries exclude a lot. Allen Riddell has nicely demonstrated just how much: he concludes that there are digital scans for only about 58% of the novels listed in bibliographies as having been published between 1800 and 1836.

One way to ensure inclusion might be to start with those bibliographies, which highlight books invisible in digital libraries. On the other hand, bibliographies also make certain things invisible. *The Terrific Register* (1825), for instance, is not in Garside’s bibliography of early-nineteenth-century fiction. Neither is *The Wonder-Working Water Mill* (1791), to mention another odd thing I bumped into. These aren’t oversights; Garside et al. acknowledge that they’re excluding certain categories of fiction from their conception of the novel. But because we’re trained to think about novels, the scale of that exclusion may only become visible after you spend some time trawling a library catalog.

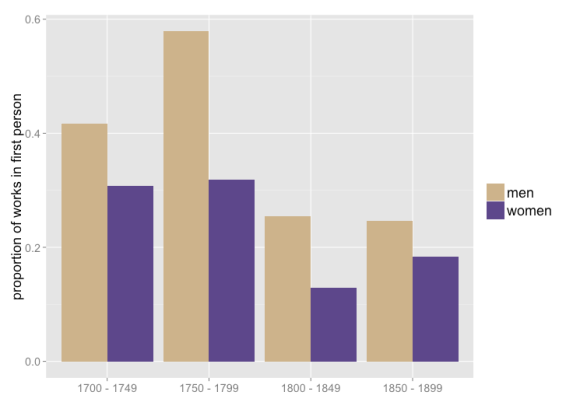

I don’t want to present this as an aporia that makes it impossible to know where to start. It’s not. Most people attempting distant reading are already starting in the right place: building up medium-sized collections of familiar generic categories like “the novel.” The boundaries of those categories may be blurrier than we usually acknowledge. But there’s also such a thing as fretting excessively about the synchronic representativeness of your sample. A lot of the interesting questions in distant reading are actually about trends, which involve relative, diachronic differences in the collection. Subtle differences of synchronic coverage may more or less drop out of questions about change over time.
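The claim that synchronic coverage gaps “more or less drop out” of diachronic questions can be illustrated with a little arithmetic. In this sketch, all numbers are invented and the trend is measured, by assumption, as an ordinary least-squares slope over decades: a sample that understates some textual feature by the same amount in every decade yields exactly the same trend as the full population, while a sample whose gap widens over time does not.

```python
def ols_slope(xs: list[float], ys: list[float]) -> float:
    """Ordinary least-squares slope of ys on xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    num = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    den = sum((x - mean_x) ** 2 for x in xs)
    return num / den


decades = [1800, 1810, 1820, 1830, 1840]
# Invented "true" rate of some textual feature in fiction, decade by decade.
true_rate = [0.20, 0.22, 0.25, 0.27, 0.30]
# A sample that understates the feature by the same amount in every decade.
constant_gap = [y - 0.03 for y in true_rate]
# A sample whose coverage gap widens by 0.01 each decade.
drifting_gap = [y - 0.01 * i for i, y in enumerate(true_rate)]

print(ols_slope(decades, true_rate))     # trend in the full population
print(ols_slope(decades, constant_gap))  # same trend, lower level
print(ols_slope(decades, drifting_gap))  # trend distorted by the drift
```

So the reassurance holds only to the degree that the gray zone is excluded at a similar rate in every period; if the boundary itself drifts, coverage choices can leak into the trend.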

On the other hand, if I’m right that the gray areas between (for instance) fiction and nonfiction are bigger and more persistently blurry than literary scholarship usually acknowledges, that’s probably an issue we should consider in the long run. When I release a page-level map of genre in a couple of weeks, I’m going to try to provide some dials that allow researchers to make more explicit choices about degrees of inclusion or exclusion.

Predictive models that report probabilities give us a natural way to handle this, because they allow us to characterize every boundary as a gradient, and explicitly acknowledge our compromises (for instance, trade-offs between precision and recall). People who haven’t done much statistical modeling often imagine that numbers will give humanists spuriously clear definitions of fuzzy concepts. My experience has been the opposite: I think our received disciplinary practices often make categories seem self-evident and stable because they teach us to focus on easy cases. Attempting to model those categories explicitly, on a large scale, can force you to acknowledge the real instability of the boundaries involved.
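One way a probability-reporting model turns a genre boundary into a “dial” is by letting the user choose the threshold above which a volume counts as fiction. The sketch below (function name, probabilities, and gold labels all hypothetical) computes precision and recall at a few thresholds to show the kind of trade-off mentioned in the paragraph above.

```python
def precision_recall(probs: list[float], gold: list[bool], threshold: float) -> tuple[float, float]:
    """Precision and recall when volumes with P(fiction) >= threshold count as fiction."""
    tp = sum(1 for p, g in zip(probs, gold) if p >= threshold and g)
    fp = sum(1 for p, g in zip(probs, gold) if p >= threshold and not g)
    fn = sum(1 for p, g in zip(probs, gold) if p < threshold and g)
    # Conventions for empty denominators vary; this sketch returns 1.0.
    precision = tp / (tp + fp) if tp + fp else 1.0
    recall = tp / (tp + fn) if tp + fn else 1.0
    return precision, recall


# Invented model probabilities and human judgments (True = fiction).
probs = [0.98, 0.95, 0.90, 0.70, 0.55, 0.45, 0.30, 0.10, 0.05]
gold = [True, True, True, True, False, True, False, False, False]

for threshold in (0.9, 0.5, 0.2):
    precision, recall = precision_recall(probs, gold, threshold)
    print(f"threshold {threshold}: precision {precision:.2f}, recall {recall:.2f}")
```

In this toy example, lowering the threshold from 0.9 to 0.2 raises recall from 0.6 to 1.0 while precision falls from 1.0 to about 0.71: a more inclusive definition of fiction, paid for by admitting more borderline volumes.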

References and acknowledgments

Training data for this project was produced by Shawn Ballard, Jonathan Cheng, Lea Potter, Nicole Moore, Clara Mount, and me. Michael L. Black and Boris Capitanu built a GUI that helped us tag volumes at the page level. Material support was provided by the National Endowment for the Humanities and the American Council of Learned Societies. Some information about results and methods is online as a paper and a poster, but much more will be forthcoming in the next month or so, along with a page-level map of broad genre categories and types of paratext.

The project would have been impossible without help from HathiTrust and HathiTrust Research Center. I’ve also been taught to read MARC records by librarians and information scientists including Tim Cole, M. J. Han, Colleen Fallaw, and Jacob Jett, any of whom could teach a course on “Cursed Metadata in Theory and Practice.”

Garside’s bibliography of early-nineteenth-century fiction, mentioned above, is: Garside, Peter, and Rainer Schöwerling. *The English Novel, 1770-1829: A Bibliographical Survey of Prose Fiction Published in the British Isles*. Ed. Peter Garside, James Raven, and Rainer Schöwerling. 2 vols. Oxford: Oxford University Press, 2000.

Paul Fyfe directed me to a couple of useful works on the genre of the sketch. Michael Widner has recently written a dissertation about the cognitive dimension of genre, titled *Genre Trouble*. I’ve also tuned into ongoing thoughts about the temporal and social dimensions of genre from Daniel Allington and Michael Witmore. The now-classic pamphlet #1 from the Stanford Literary Lab, “Quantitative Formalism,” is probably responsible for my interest in the topic.