Literary critics have been having a speculative conversation about close and distant reading. It might be premature to call it a debate.

A “debate” is normally a situation where people are free to choose between two paths. “Should I believe Habermas, or Foucault? I’m listening; I could go either way.” Conversation about distant reading is different, first, because there’s not much need to make a choice. Have any critics stopped reading closely? A close reading of The Bourgeois suggests that Franco Moretti hasn’t.

More importantly, this isn’t a debate yet because most of the people involved aren’t free to explore both paths. So far only a tiny number of scholars have actually tried distant reading, and it’s easy to see why. You can wake up tomorrow and try a Foucauldian reading of Frankenstein, but you can’t wake up and trace patterns of change in a thousand novels. In either case, you may need to learn new methods, but in the “distant” case, it can also take years to assemble a collection of texts.

A dataset for distant reading

To reduce barriers to entry, I’ve collaborated with HathiTrust Research Center to create an easier place to start with English-language literature. It’s aimed at scholars studying long-nineteenth-century (1750-1922) fiction and poetry, but it will gradually expand into the twentieth century. This post describes the humanistic uses of the dataset; if you want technical information, there’s more on the page where the data actually lives.

HathiTrust contains more than a million volumes in English between 1700 and 1922. Contractual agreements make it hard to share the texts themselves in bulk, but many of the questions that can be posed “at a distance” can be posed just as well using simpler representations of the texts — for instance, by counting the words they contain. To support this project, HathiTrust Research Center has extracted page-level word counts for 4.8 million volumes; scholars who are interested in the highest level of detail should go directly to their data.

However, many literary scholars are mainly concerned with books in a particular genre; they limit their inquiries, say, to “poetry” or “prose fiction.” Finding those needles in a five-million-volume haystack is not easy. Many books in this period don’t carry genre tags; even when they do, volumes are heterogeneous things. A volume of poetry, for instance, may begin with a prose life of the author and end with publishers’ ads.

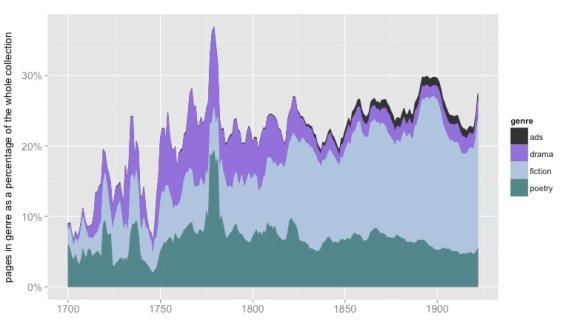

To create datasets that reliably track a single genre, we need page-level metadata. The National Endowment for the Humanities and the American Council of Learned Societies funded a year-long project to create that metadata. (The methods involved are described in a white paper on “Understanding Genre,” along with information about accuracy.) Now, by pairing this metadata with HTRC’s page-level word counts, I’ve created three genre-specific datasets of word counts covering poetry, fiction, and drama from 1700 to 1922. (Coverage is relatively sparse before 1750; if you need the early eighteenth century, you might want a resource like ECCO-TCP instead of, or in addition to, this one.)

The collection consists of word counts for 101,948 volumes of fiction, 58,724 volumes of poetry, and 17,709 volumes of drama, aggregated at the volume level and including only pages identified as belonging to the relevant genre. I’ve collected these volume-level files in tar.gz chunks by genre and date, and have provided basic metadata for them all. You can use the volume IDs to view the original texts on the HathiTrust website if you need to read them closely. I’m calling this a “collection” rather than a “corpus” because I don’t necessarily recommend that you use the whole thing, as is. The whole thing may or may not represent the sample you need for your research question. What it represents is, “American university and public libraries, insofar as they were digitized in the year 2012 (when the project began).” For some big diachronic questions, that’s a good sample; for other questions, you’ll need to be more selective.

Because this is a very large collection, the sample you need for your research is likely to be contained somewhere within it. To address some questions, you might even select several samples and contrast them. To understand the history of literary prestige, for instance, Jordan Sellers and I gathered 360 prominent books of poetry by finding reviews in literary magazines and extracting the corresponding books from HathiTrust; we then contrasted that sample with 360 more obscure volumes selected from the whole HathiTrust collection of poetry. Using only volume-level word counts for those two samples, we were able to draw inferences about the way diachronic literary change is related to synchronic prestige.
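
A minimal sketch of how a contrast sample of this kind might be drawn. It is not the procedure used in the study mentioned above. It assumes you already have a list of reviewed volumes and a larger pool, each as (volume ID, year) pairs, and it matches the random sample to the reviewed sample decade by decade. The function names and the toy IDs are hypothetical.

```python
import random
from collections import Counter, defaultdict


def decade(year):
    """Round a year down to the start of its decade (1821 -> 1820)."""
    return year // 10 * 10


def matched_random_sample(reviewed, pool, seed=0):
    """For each decade, draw as many pool volumes as there are reviewed volumes.

    reviewed and pool are lists of (volume_id, year) pairs. Volumes already in
    the reviewed set are excluded from the pool before sampling.
    """
    rng = random.Random(seed)  # fixed seed so the selection is repeatable
    reviewed_ids = {vol_id for vol_id, _ in reviewed}
    needed = Counter(decade(year) for _, year in reviewed)

    by_decade = defaultdict(list)
    for vol_id, year in pool:
        if vol_id not in reviewed_ids:
            by_decade[decade(year)].append(vol_id)

    sample = []
    for dec, n in sorted(needed.items()):
        candidates = by_decade[dec]
        # Take fewer than n only if the pool runs short in that decade.
        sample.extend(rng.sample(candidates, min(n, len(candidates))))
    return sample


# Toy data: three "reviewed" volumes and a pool of forty others (made-up IDs).
reviewed = [("r1", 1821), ("r2", 1825), ("r3", 1867)]
pool = (
    [(f"p{i}", 1820 + i % 10) for i in range(20)]
    + [(f"q{i}", 1860 + i % 10) for i in range(20)]
    + reviewed
)
sample = matched_random_sample(reviewed, pool)
print(sample)
```

Matching by decade is only one possible design choice; it keeps the two samples comparable across the timeline, so that differences between them are less likely to be an artifact of date.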

Well-known texts may be represented in this dataset by dozens of reprints. For some questions, that may be exactly the sort of “weighted” sample you want; for other questions, you’ll want to winnow each title down to a single early example. More datasets may be developed to help you do that.
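
As a rough illustration of winnowing reprints, the sketch below keeps the earliest record for each author and title pair. The field names (`htid`, `author`, `title`, `date`) and the records themselves are assumptions made for the example, not a description of the actual metadata table, and the matching rule is deliberately crude: real titles vary far more than this handles.

```python
def normalize(text):
    """Lowercase and keep only letters, digits and single spaces (a crude matching key)."""
    kept = "".join(ch if ch.isalnum() else " " for ch in text.lower())
    return " ".join(kept.split())


def earliest_per_title(records):
    """Keep one record per (author, title) key: the one with the smallest date."""
    earliest = {}
    for rec in records:
        key = (normalize(rec["author"]), normalize(rec["title"]))
        if key not in earliest or rec["date"] < earliest[key]["date"]:
            earliest[key] = rec
    return sorted(earliest.values(), key=lambda r: r["date"])


# Made-up records: two printings of one novel, plus a second title.
records = [
    {"htid": "a1", "author": "Shelley, Mary", "title": "Frankenstein", "date": 1831},
    {"htid": "a2", "author": "Shelley, Mary", "title": "Frankenstein.", "date": 1818},
    {"htid": "a3", "author": "Eliot, George", "title": "Middlemarch", "date": 1872},
]
kept = earliest_per_title(records)
print([r["htid"] for r in kept])
```

Note that the date here is the date of the volume, so the "earliest example" is only the earliest copy present in the collection, which may itself be a reprint.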

Distant reading rarely means “big data”

I realize the practice described above (selecting samples of a few hundred or a few thousand books to address particular questions) doesn’t line up with the version of distant reading currently circulating in public imagination. Isn’t the point of distant reading to construct a massive database that includes “everything that has been thought and said”? The Nation recently said so, and also warned us that “in reality, servers powerful enough to process big data can only be located in a highly select number of well-endowed institutions.”

That sounds grim, but I’m happy to report that it’s also malarkey. You can download this dataset, and process it, on your laptop. It’s true that I used our campus cluster to create it (because I had to manage a terabyte of text). But a) managing a terabyte won’t put a hole in most endowments, and b) you don’t need to do that anyway. Once nonfiction is set aside, we’re talking about a smaller group of books (compressed, this whole dataset runs to about 5GB). A well-designed sampling strategy can make it even smaller.

Wait, what’s this about “sampling”? Aren’t distant readers supposed to claim to have everything? Not really. In the early days of distant reading, Franco Moretti did frame the project as a challenge to literary historians’ claims about synchronic coverage. (We only discuss a tiny number of books from any given period; what about all the rest?) But even in those early publications, Moretti acknowledged that we would only be able to represent “all the rest” through some kind of sample.

Fifteen years later, it’s becoming clear that distant reading has a lot of applications that aren’t about synchronic completeness at all. Expanding the diachronic scope of our research can be an equally important source of discovery. Certain kinds of change only become visible when you compare many examples across long timelines. Even if we restricted a digital corpus (say) to the academic canon, or to a thousand bestsellers, computational analysis would allow us to see long-term changes that aren’t visible to casual recollection.

It’s true that distant readers will often want to have the biggest possible table of metadata, so that our sampling strategies aren’t unduly constrained. But from that table, we may only sample a few hundred or a few thousand titles to address any single question. This scale of inquiry is not, in any meaningful sense, “big data.” (In fact, I doubt the phrase “big data” is often very meaningful, but that’s another story.) It’s a larger sample than literary scholars have usually attempted to describe, but it would not greatly distress our neighbors in linguistics and sociology.

How hard is this to use?

Of course, we’re not linguists or sociologists, so there is going to be a learning curve involved when we apply quantitative methods on any scale. The main dataset I’m providing here includes 178,381 separate files — one file for each volume. This is not something that can be sliced easily using a tool like Excel. Someone involved with the project needs to be able to program in order to pair the metadata table with the files.
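
To give a sense of what that programming involves, here is a minimal sketch of pairing a metadata table with per-volume files. The layout is assumed for illustration only: a CSV metadata table with `htid` and `date` columns, and one file per volume containing `token<TAB>count` lines. Check the data description for the real column names and file format before adapting it. In-memory strings stand in for the files so the example runs on its own.

```python
import csv
import io
from collections import Counter


def read_counts(lines):
    """Parse 'token<TAB>count' lines into a Counter, skipping malformed rows."""
    counts = Counter()
    for line in lines:
        parts = line.rstrip("\n").split("\t")
        if len(parts) != 2 or not parts[1].isdigit():
            continue
        counts[parts[0]] += int(parts[1])
    return counts


def select_ids(metadata_rows, start, end):
    """Return volume IDs whose (assumed) 'date' column falls in [start, end]."""
    return [
        row["htid"]
        for row in metadata_rows
        if row["date"].isdigit() and start <= int(row["date"]) <= end
    ]


def aggregate(volume_ids, open_volume):
    """Sum word counts over the selected volumes.

    open_volume(vol_id) must return an iterable of lines for that volume.
    """
    total = Counter()
    for vol_id in volume_ids:
        total.update(read_counts(open_volume(vol_id)))
    return total


# Tiny in-memory stand-ins for the metadata table and the volume files.
metadata_csv = "htid,date\nvol1,1815\nvol2,1850\nvol3,1901\n"
fake_files = {
    "vol1": "the\t10\nsea\t2\n",
    "vol2": "the\t7\nsea\t1\n",
    "vol3": "the\t5\ncity\t4\n",
}

rows = list(csv.DictReader(io.StringIO(metadata_csv)))
ids = select_ids(rows, 1800, 1860)
totals = aggregate(ids, lambda v: io.StringIO(fake_files[v]))
print(ids, totals.most_common(2))
```

With real data, `open_volume` would open the file on disk that corresponds to each volume ID; how IDs map to file names is something to confirm against the data description rather than assume.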

On the other hand, there may be some questions that can be answered with a simple yearly summary, so I’ve also provided yearly_summary tables for each genre that aggregate term frequencies for the 10,000 most common tokens in each genre (selected by document frequency). This is the gentlest on-ramp to the dataset: data in this form probably can be sliced with Excel. To make it even easier, I’ve also gone ahead and applied OCR correction and spelling normalization to those tables.
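
For readers who would rather script this than use a spreadsheet, the sketch below computes a word's relative frequency per year from a table of yearly counts. The column names (`year`, `token`, `count`) and the numbers are invented for the example; the real yearly_summary tables may be laid out differently.

```python
import csv
import io
from collections import defaultdict


def relative_frequency(rows, word):
    """Map each year to count(word) / total count of all listed tokens that year."""
    word_counts = defaultdict(int)
    totals = defaultdict(int)
    for row in rows:
        year, count = int(row["year"]), int(row["count"])
        totals[year] += count
        if row["token"] == word:
            word_counts[year] += count
    return {
        year: word_counts[year] / totals[year]
        for year in sorted(totals)
        if totals[year] > 0
    }


# Invented numbers, standing in for a yearly summary table.
table = """year,token,count
1800,the,90
1800,heart,10
1850,the,80
1850,heart,20
"""
rows = list(csv.DictReader(io.StringIO(table)))
freq = relative_frequency(rows, "heart")
for year, value in freq.items():
    print(year, round(value, 3))
```

One caveat about the design: the denominator here is the total of the tokens listed in the table, not of every word printed that year, so frequencies computed this way are relative to the listed vocabulary only.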

But the yearly_summary table aggregates all the volumes in the collection, and (as I’ve stressed) you may not want all of them. This dataset is a roughly hewn, but very large, block of stone. You may be able to find the corpus you need somewhere within it, but decisions about selection are yours to make. Over the course of the next two years I hope to extend coverage further into the twentieth century; it is not illegal to share word counts from texts still covered by copyright. If you’re interested in more complex kinds of distant reading where word order matters, you can contact the HathiTrust Research Center; they are creating a workflow that can handle more complex kinds of computational analysis.

Postscript: We’ve done a lot of testing, but this is still a beta release. General estimates about error are summarized in “Understanding Genre”. Precision in these datasets is higher than 97%, but that still means there will be hundreds of volumes and thousands of pages mistakenly included. If you notice systematic problems with the data, please send feedback to the e-mail address provided in the data description. But individual misclassified volumes are not problems we’re likely to fix on a case-by-case basis; that sort of problem will be addressed by improving our methods in our next release.
