The pace of posts here has slowed, and it may stay pretty slow until I get some new data-slicing tools set up.

I spent the weekend trying to understand when I might want to use a vector space model to compare documents or terms, and when ordinary Pearson’s correlation would be better. Also, I now understand how Ward’s method of hierarchical agglomerative clustering is different from all the other methods.
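For anyone puzzling over the same distinction, here is a minimal sketch of how the two measures relate, assuming documents or terms are represented as plain lists of counts. The function names and the counts are invented for illustration. Pearson's correlation turns out to be the cosine measure applied after subtracting each vector's mean, which is why the two can disagree sharply.

```python
import math

def cosine(u, v):
    """Cosine of the angle between two vectors (the usual vector space measure)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def pearson(u, v):
    """Pearson's r: the cosine of the two vectors after mean-centering each."""
    mean_u = sum(u) / len(u)
    mean_v = sum(v) / len(v)
    return cosine([a - mean_u for a in u], [b - mean_v for b in v])

# Invented counts of two words across five documents.
word_a = [10, 12, 11, 13, 12]
word_b = [12, 10, 13, 11, 12]

print(round(cosine(word_a, word_b), 3))   # high: both words are common everywhere
print(round(pearson(word_a, word_b), 3))  # negative: they rise and fall in opposition
```

Cosine similarity rewards two words for both being frequent, while Pearson's correlation only looks at whether they vary together around their own averages.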

Aside from the sheer fun of geekery, what I’ve learned is that the digital humanities have become *much* easier to enter than they were in the 90s. I attempted a bit of data-mining in the early 90s, and published an article containing a few graphs in Studies in Romanticism, but didn’t pursue the approach much further because I found it nearly impossible to produce the kind of results I wanted on the necessary scale. (You have to remember that my interests lean toward the large end of the scale continuum in DH.)

I told myself that I would get back in the game when the kinds of collections I needed began to become available, and in the last couple of years it became clear to me that they were, if not available, at least possible to construct. But I actually had no idea how transparent and accessible things have become. So much information is freely available on the web, and with tools like Zotero and SEASR the web is also becoming a medium in which one can do the work itself. Everything’s frickin interoperable. It’s so different from the 90s when you had to build things more or less from scratch yourself.

Month: January 2011

Over the next several years, I predict that we’re going to hear a lot of arguments about what the digital humanities can’t do. They can’t help us distinguish insightful and innovative works from merely typical productions of the press. They can’t help us make aesthetic judgments. They can’t help students develop a sense of what really matters about their individual lives, or about history.

Personally, I’m going to be thrilled. First of all, because Blake was right about many things, but above all about the humanities, when he wrote “Opposition is true Friendship.” The best way to get people to pay attention to the humanities is for us to have a big, lively argument about things that matter — indeed, I would go so far as to say that no humanistic project matters much until it gets attacked.

And critics of the digital humanities will be pointing to things that really do matter. We ought to be evaluating authors and works, and challenging students to make similar kinds of judgments. We ought to be insisting that students connect the humanities to their own lives, and develop a broader feeling for the comic and tragic dimensions of human history.

Of course, it’s not as though we’re doing much of that now. But if humanists’ resistance to the digitization of our profession causes us to take old bromides about the humanities more seriously, and give them real weight in the way we evaluate our work — then I’m all for it. I’ll sign up, in full seriousness, as a fan of the coming reaction against the digital humanities, which might even turn out to be more important than digital humanism itself.

I wouldn’t, after all, want every humanist to become a “digital humanist.” I believe there’s a lot we can learn from new modes of analysis, networking, and visualization, but I don’t believe the potential is infinite, or that new approaches ineluctably supplant old ones. The New York Times may have described data-intensive methods as an alternative to “theory,” but surely we’ve been trained to recognize a false dichotomy? “Theory” used to think it was an alternative to “humanism,” and that was wrong too.

I also predict that the furor will subside, in a decade or so, when scholars start to understand how new modes of analysis help them do things they presently want to do, but can’t. I’ve been thinking a lot about Benjamin Schmidt’s point that search engines are already a statistically sophisticated technology for assisted reading. Of course humanists use search engines to mine data every day, without needing to define a tf-idf score, and without getting so annoyed that they exclaim “Search engines will never help us properly appreciate an individual author’s sensibility!”

That’s the future I anticipate for the digital humanities. I don’t think we’re going to be making a lot of arguments that explicitly foreground a quantitative methodology. We’ll make a few. But more often text mining, or visualization, will function as heuristics that help us find and recognize significant patterns, which we explore in traditional humanistic ways. Once a heuristic like that is freely available and its uses are widely understood, you don’t need to make a big show of using it, any more than we now make a point of saying “I found these obscure sources by performing a clever keyword search on ECCO.” But it may still be true that the heuristic is permitting us to pursue different kinds of arguments, just as search engines are now probably permitting us to practice a different sort of historicism.

But once this becomes clear, we’ll start to agree with each other. Things will become boring again, and The New York Times will stop paying attention to us. So I plan to enjoy the argument while it lasts.

In a few years, some enterprising historian of science is going to write a history of the “culturomics” controversy, and it’s going to be fun to read. In some ways, the episode is a classic model of the social processes underlying the production of knowledge. Whenever someone creates a new method or tool (say, an air pump), and claims to produce knowledge with it, they run head-on into the problem that knowledge is social. If the tool is really new, their experience with it is by definition anomalous, and anomalous experiences — no matter how striking — never count as knowledge. They get dismissed as amusing curiosities.

The team that published in Science has attempted to address this social problem, as scientists usually do, by making their data public and carefully describing the conditions of their experiment. In this case, however, one runs into the special problem that the underlying texts are the private property of Google, and have been released only in a highly compressed form that strips out metadata. As Matt Jockers may have been the first to note, we don’t yet even have a bibliography of the contents of each corpus. Yesterday, in a new FAQ posted on culturomics.org (see section III.5), researchers acknowledged that they want to release such a bibliography, but haven’t yet received permission from Google to do it.

This is going to produce a very interesting deadlock. I’ve argued in many other posts that the Google dataset is invaluable, because its sheer scale allows us to grasp diachronic patterns that wouldn’t otherwise be visible. But without a list of titles, it’s going to be difficult to cite it as evidence. What I suspect may happen is that humanists will start relying on it in private to discover patterns, but then write those patterns up as if they had just been doing, you know, a bit of browsing in 500,000 books — much as we now use search engines quietly and without acknowledgment, although they in fact entail significant methodological choices. As Benjamin Schmidt has recently been arguing, search technology is based on statistical presuppositions more complex and specific than most people realize, presuppositions that humanists already “use all the time to, essentially, do a form of reading for them.”

A different solution, and the one I’ll try, is to use the Google dataset openly, but in conjunction with other smaller and more transparent collections. I’ll use the scope of the Google dataset to sketch broad contours of change, and then switch to a smaller archive in order to reach firmer and more detailed conclusions. But I still hope that Google can somehow be convinced to release a bibliography — at least of the works that are out of copyright — and I would urge humanists to keep lobbying them.

If some of the dilemmas surrounding this tool are classic history-of-science problems, others are specific to a culture clash between the humanities and the sciences. For instance, I’ve argued in the past that humanists need to develop a quantitative conception of error. We’re very talented at making the perfect the enemy of the good, but that simply isn’t how statistical knowledge works. As the newly-released FAQ points out, there’s a comparably high rate of error in fields like genomics.

On other topics, though, it may be necessary for scientists to learn a bit more about the way humanists think. For instance, one of the corpora included in the ngram viewer is labeled “English fiction.” Matt Jockers was the first to point out that this is potentially ambiguous. I assumed that it contained mostly novels and short stories, since that’s how we use the word in the humanities, but prompted by Matt’s skepticism, I wrote the culturomics team to inquire. Yesterday in the FAQ they answered my question, and it turns out that Matt’s skepticism was well founded.

> Crucially, it’s not just actual works of fiction! The English fiction corpus contains some fiction and lots of fiction-associated work, like commentary and criticism. We created the fiction corpus as an experiment meant to explore the notion of creating a subject-specific corpus. We don’t actually use it in the main text of our paper because the experiment isn’t very far along. Even so, a thoughtful data analyst can do interesting things with this corpus, for instance by comparing it to the results for English as a whole.

Humanists are going to find that an eye-opening paragraph. This conception of fiction is radically different from the way we usually understand fiction — as a genre. Instead, the culturomics team has constructed a corpus based on fiction as a subject category; or perhaps it would be better to say that they have combined the two conceptions. I can say pretty confidently that no humanist will want to rely on the corpus of “English fiction” to make claims about fiction; it represents something new and anomalous.

On the other hand, I have to say that I’m personally grateful that the culturomics team made this corpus available — not because it tells me much about fiction, but because it tells me something about what happens when you try to hold “subject designations” constant across time instead of allowing the relative proportions of books in different subjects to fluctuate as they actually did in publishing history. I think they’re right that this is a useful point of comparison, although at the moment the corpus is labeled in a potentially misleading way.

In general, though, I’m going to use the main English corpus, which is easier to interpret. The lack of metadata is still a problem here, but this corpus seems to represent university library collections more fully than any other dataset I have access to. While sheer scale is a crude criterion of representativeness, for some questions it’s a useful one.

The long and short of it all is that the next few years are going to be a wild ride. I’m convinced that advances in digital humanities are reaching the point where they’re going to start allowing us to describe some large, fascinating, and until now largely invisible patterns. But at the moment, the biggest dataset — prominent in public imagination, but also genuinely useful — is curated by scientists, and by a private corporation that has not yet released full information about it. The stage is set for a conflict of considerable intensity and complexity.

Benjamin Schmidt has been posting some fascinating reflections on different ways of analyzing texts digitally and characterizing the affinities between them.

I’m tempted to briefly comment on a technique of his that I find very promising. This is something that I don’t yet have the tools to put into practice myself, and perhaps I shouldn’t comment until I do. But I’m just finding the technique too intriguing to resist speculating about what might be done with it.

Basically, Schmidt describes a way of mapping the relationships between terms in a particular archive. He starts with a word like “evolution,” identifies texts in his archive that use the word, and then uses tf-idf weighting to identify the other words that, statistically, do most to characterize those texts.
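To make that first step concrete, here is a rough sketch of one way it might work. This is not Schmidt's actual code: the function name `characteristic_terms`, the particular tf-idf formula, and the toy archive are all assumptions made for illustration.

```python
import math
from collections import Counter

def characteristic_terms(docs, seed, top_n=5):
    """Rank words by tf-idf within the documents that contain `seed`.

    tf  = the word's count across the seed-containing documents
    idf = log(total documents / documents containing the word)
    """
    # Document frequency: in how many documents does each word appear?
    doc_freq = Counter()
    for tokens in docs:
        doc_freq.update(set(tokens))

    # Term frequency, counted only in the documents that use the seed word.
    subset = [tokens for tokens in docs if seed in tokens]
    term_freq = Counter(tok for tokens in subset for tok in tokens)

    scores = {
        word: count * math.log(len(docs) / doc_freq[word])
        for word, count in term_freq.items()
        if word != seed
    }
    return sorted(scores, key=scores.get, reverse=True)[:top_n]

# Toy archive, invented for illustration.
archive = [
    "evolution species selection species the of".split(),
    "evolution society progress society the of".split(),
    "poetry diction elegance the of".split(),
    "sermon virtue duty the of".split(),
]
print(characteristic_terms(archive, "evolution", top_n=2))
```

Words like "the" and "of" score zero because they appear in every document, so the ranking surfaces words that are frequent in the seed's documents and rare elsewhere.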

After iterating this process a few times, he has a list of something like 100 terms that are related to “evolution” in the sense that this whole group of terms tends, not just to occur in the same kinds of books, but to be statistically prominent in them. He then uses a range of different clustering algorithms to break this list into subsets. There is, for instance, one group of terms that’s clearly related to social applications of evolution, another that seems to be drawn from anatomy, and so on. Schmidt characterizes this as a process that maps different “discourses.” I’m particularly interested in his decision not to attempt topic modeling in the strict sense, because it echoes my own hesitation about that technique:

> In the language of text analysis, of course, I’m drifting towards not discourses, but a simple form of topic modeling. But I’m trying to only submerge myself slowly into that pool, because I don’t know how well fully machine-categorized topics will help researchers who already know their fields. Generally, we’re interested in heavily supervised models on locally chosen groups of texts.

This makes a lot of sense to me. I’m not sure that I would want a tool that performed pure “topic modeling” from the ground up — because in a sense, the better that tool performed, the more it might replicate the implicit processing and clustering of a human reader, and I already have one of those.

Schmidt’s technique is interesting to me because the initial seed word gives it what you might call a bias, as well as a focus. The clusters he produces aren’t necessarily the same clusters that would emerge if you tried to map the latent topics of his whole archive from the ground up. Instead, he’s producing a map of the semantic space surrounding “evolution,” as seen from the perspective of that term. He offers this less as a finished product than as an example of a heuristic that humanists might use for any keyword that interested them, much in the way we’re now accustomed to using simple search strategies. Presumably it would also be possible to move from the semantic clusters he generates to a list of the documents they characterize.

I think this is a great idea, and I would add only that it could be adapted for a number of other purposes. Instead of starting with a particular seed word, you might start with a list of terms that happen to be prominent in a particular period or genre, and then use Schmidt’s technique of clustering based on tf-idf correlations to analyze the list. “Prominence” can be defined in a lot of different ways, but I’m particularly interested in words that display a similar profile of change across time.

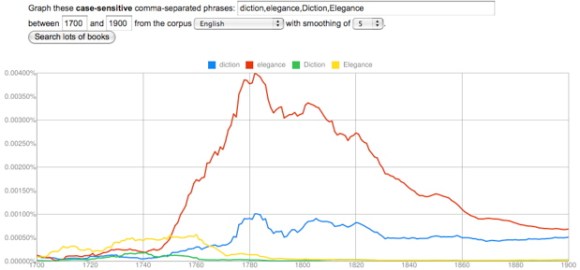

For instance, I think it’s potentially rather illuminating that “diction” and “elegance” change in closely correlated ways in the late eighteenth and early nineteenth century. It’s interesting that they peak at the same time, and I might even be willing to say that the dip they both display, in the radical decade of the 1790s, suggests that they had a similar kind of social significance. But of course there will be dozens of other terms (and perhaps thousands of phrases) that also correlate with this profile of change, and the Google dataset won’t do anything to tell us whether they actually occurred in the same sorts of books. This could be a case of unrelated genres that happened to have emerged at the same time.
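Finding the other terms that share this profile could be sketched roughly as follows. The function names, the 0.9 threshold, and the frequencies are all invented for illustration (this is not real ngram data), and Pearson's correlation is only one of several ways "similar profile" might be defined.

```python
import math

def pearson(xs, ys):
    """Pearson's correlation between two equal-length series."""
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)

def terms_with_similar_profile(profiles, seed, threshold=0.9):
    """Return terms whose frequency-by-period series correlates with the seed's."""
    target = profiles[seed]
    matches = {
        term: pearson(target, series)
        for term, series in profiles.items()
        if term != seed
    }
    return sorted(
        (term for term, r in matches.items() if r >= threshold),
        key=lambda term: matches[term],
        reverse=True,
    )

# Invented relative frequencies per decade, 1760s-1810s (not real ngram data).
profiles = {
    "diction":  [2.0, 3.0, 4.0, 3.2, 4.5, 4.0],
    "elegance": [4.1, 6.0, 8.2, 6.3, 9.0, 8.1],
    "railway":  [0.0, 0.0, 0.1, 0.1, 0.3, 0.9],
}
print(terms_with_similar_profile(profiles, "diction"))
```

A list produced this way only says that the words rose and fell together. It says nothing about whether they appeared in the same books.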

But I think a list of chronologically correlated terms could tell you a lot if you then took it to an archive with metadata, where Schmidt’s technique of tf-idf clustering could be used to break the list apart into subsets of terms that actually did occur in the same groups of works. In effect this would be a kind of topic modeling, but it would be topic modeling combined with a filter that selects for a particular kind of historical “topicality” or timeliness. I think this might tell me a lot, for instance, about the social factors shaping the late-eighteenth-century vogue for characterizing writing based on its “diction” — a vogue that, incidentally, has a loose relationship to data mining itself.
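Here is a minimal sketch of what breaking such a list into subsets might look like, assuming each term has already been given a vector of tf-idf weights across the same set of documents. The clustering method here (average-linkage agglomerative clustering on cosine similarity) is a stand-in I chose for simplicity, not necessarily any of the algorithms Schmidt used, and the weights are invented.

```python
import math
from itertools import combinations

def cosine(u, v):
    """Cosine similarity between two weight vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norms = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norms if norms else 0.0

def cluster_terms(vectors, k):
    """Average-linkage agglomerative clustering of terms into k groups.

    `vectors` maps each term to its weights across the same list of documents.
    """
    clusters = [[term] for term in vectors]

    def similarity(c1, c2):
        # Mean pairwise similarity between the members of two clusters.
        pairs = [(a, b) for a in c1 for b in c2]
        return sum(cosine(vectors[a], vectors[b]) for a, b in pairs) / len(pairs)

    while len(clusters) > k:
        # Merge the two most similar clusters.
        i, j = max(
            combinations(range(len(clusters)), 2),
            key=lambda ij: similarity(clusters[ij[0]], clusters[ij[1]]),
        )
        merged = clusters[i] + clusters[j]
        clusters = [c for n, c in enumerate(clusters) if n not in (i, j)] + [merged]
    return [sorted(c) for c in clusters]

# Invented tf-idf weights for four terms across four documents.
weights = {
    "diction":    [0.9, 0.8, 0.0, 0.1],
    "elegance":   [0.8, 0.9, 0.1, 0.0],
    "sensorial":  [0.0, 0.1, 0.9, 0.7],
    "irritative": [0.1, 0.0, 0.8, 0.9],
}
print(cluster_terms(weights, k=2))
```

Because each dimension of a term's vector is a document, the resulting groups are groups of terms that are prominent in the same works, which is exactly the information the Google dataset cannot supply.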

I’m not sure whether other humanists would accept this kind of technique as evidence. Schmidt has some shrewd comments on the difference between data mining and assisted reading, and he’s right that humanists are usually going to prefer the latter. Plus, the same “bias” that makes a technique like this useful dispels any illusion that it is a purely objective or self-generating pattern. It’s clearly a tool used to slice an archive from a particular angle, for particular reasons.

But whether I could use it as evidence or not, a technique like this would be heuristically priceless: it would give me a way of identifying topics that peculiarly characterize a period — or perhaps even, as the dip in the 1790s hints, a particular impulse in that period — and I think it would often turn up patterns that are entirely unexpected. It might generate these patterns by looking for correlations between words, but it would then be fairly easy to turn lists of correlated words into lists of works, and investigate those in more traditionally humanistic ways.

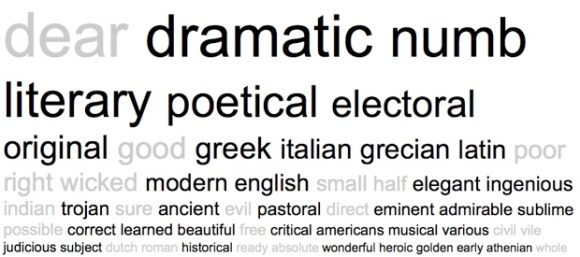

For instance, I had no idea that “diction” would correlate with “elegance” until I stumbled on the connection, but having played around with the terms a bit in MONK, I’m already getting a sense that the terms are related not just through literary criticism (as you might expect), but also through historical discourse and (oddly) discourse about the physiology of sensation. I don’t have a tool yet that can really perform Schmidt’s sort of tf-idf clustering, but just to leave you with a sense of the interesting patterns I’m glimpsing, here’s a word cloud I generated in MONK by contrasting eighteenth-century works that contain “elegance” to the larger reference set of all eighteenth-century works. The cloud is based on Dunning’s log likelihood, and limited to adjectives, frankly, just because they’re easier to interpret at first glance.

There’s a pretty clear contrast here between aesthetic and moral discourse, which is interesting to begin with. But it’s also interesting that the emphasis on aesthetics extends into physiological terms like “sensorial,” “irritative,” and “numb,” and historical terms like “Greek” and “Latin.” Moreover, many of the same terms recur if you pursue the same strategy with “diction.”

A lot of words here are predictably literary, but again you see sensory terms like “numb,” and historical ones like “Greek,” “Latin,” and “historical” itself. Once again, moreover, moral discourse is interestingly underrepresented. This is actually just one piece of the larger pattern you might generate if you pursued Schmidt’s clustering strategy. Dunning’s log likelihood is not the same thing as tf-idf clustering, and the MONK corpus of 1000 eighteenth-century works is smaller than one would wish. But the patterns I’m glimpsing are interesting enough to suggest to me that this general kind of approach could tell me a lot of things I don’t yet know about a period.
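For readers curious about the statistic behind these word clouds, here is a minimal sketch of Dunning's log likelihood for a single word, computed from a 2x2 table (the word versus all other words, in an analysis set versus a reference set). I'm not claiming this is how MONK implements it. The function name and the counts are invented, the sketch assumes the two sets do not overlap, and the sign is a convenience I added to separate overrepresented from underrepresented words.

```python
import math

def log_likelihood(count_a, size_a, count_b, size_b):
    """Signed Dunning's G2 for one word.

    count_a, size_a: the word's count and the total words in the analysis set
    count_b, size_b: the same for the reference set (assumed disjoint here)
    Positive when the word is overrepresented in the analysis set.
    """
    observed = [
        [count_a, size_a - count_a],
        [count_b, size_b - count_b],
    ]
    total = size_a + size_b
    row_totals = [size_a, size_b]
    col_totals = [count_a + count_b, total - count_a - count_b]

    g2 = 0.0
    for i in range(2):
        for j in range(2):
            expected = row_totals[i] * col_totals[j] / total
            if observed[i][j] > 0:  # a zero cell contributes nothing
                g2 += observed[i][j] * math.log(observed[i][j] / expected)
    g2 *= 2

    overrepresented = count_a / size_a >= count_b / size_b
    return g2 if overrepresented else -g2

# Invented counts: a word in works containing "elegance" vs. all other works.
print(log_likelihood(30, 100_000, 40, 1_000_000))  # positive: overrepresented
print(log_likelihood(1, 100_000, 100, 1_000_000))  # negative: underrepresented
```

Unlike a simple ratio of frequencies, the statistic grows with the amount of evidence, so a rare word that appears twice instead of once does not swamp a common word with a consistent difference.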