This will be an old-fashioned, shamelessly opinionated, 1000-word blog post.

Anyone who has tried to write literary history using numbers knows that they are a double-edged sword. On the one hand they make it possible, not only to consider more examples, but often to trace subtler, looser patterns than we could trace by hand.

On the other hand, quantitative methods are inherently complex, and unfamiliar to humanists. So it’s easy to bog down in preambles about method.

Social scientists talking about access to healthcare may get away with being slightly dull. But literary criticism has little reason to exist unless it’s interesting; if it bogs down in a methodological preamble, it’s already dead. Some people call the cause of death “positivism,” but only because that sounds more official than “boredom.”

This is a rhetorical rather than epistemological problem, and it needs a rhetorical solution. For instance, Matthew Wilkens and Cameron Blevins have rightly been praised for focusing on historical questions, moving methods to an appendix if necessary. You may also recall that a book titled Distant Reading recently won a book award in the US. Clearly, distant reading can be interesting, even exciting, when writers are able to keep it simple. That requires resisting several temptations.

One temptation to complexity is technical, of course: writers who want to reach a broad audience need to resist geeking out over the latest algorithm. Perhaps fewer people recognize that the advice of more traditional colleagues can be another source of temptation. Scholars who haven’t used computational methods rarely understand the rhetorical challenges that confront distant readers. They worry that our articles won’t be messy enough — bless their hearts — so they advise us to multiply close readings, use special humanistic visualizations, add editorial apparatus to the corpus, and scatter nuances liberally over everything.

Some parts of this advice are useful: a crisp, pointed close reading can be a jolt of energy. And Katherine Bode is right that, in 2016, scholars should share data. But a lot of the extra knobs and nuances that colleagues suggest adding are gimcrack notions that would aggravate the real problem we face: complexity.

Consider the common advice that distant readers should address questions about the representativeness of their corpora by weighting all the volumes to reflect their relative cultural importance. A lot of otherwise smart people have recommended this. But as far as I know, no one ever does it. The people who recommend it don’t do it themselves, because a moment’s thought reveals that weighting volumes can only multiply dubious assumptions. Personally, I suspect that all quests for the One Truly Representative Corpus are a mug’s game. People who worry about representativeness are better advised to consult several differently selected samples. That sometimes reveals confounding variables, but just as often reveals that selection practices make little difference for the long-term history of the variable you’re tracing. (The differences between canon and archive are not always as large, or as central to this project, as Franco Moretti initially assumed.)

Another tempting piece of advice comes from colleagues who invite distant readers to prove humanistic credentials by adding complexity to their data models. This suggestion draws moral authority from a long-standing belief that computers force everything to be a one or a zero, whereas human beings are naturally at home in paradox. That’s why Captain Kirk could easily destroy alien robots by confronting them with the Liar’s Paradox. “How can? It be X. But also? Not X. Does not compute. <Smell of frying circuitry>.”

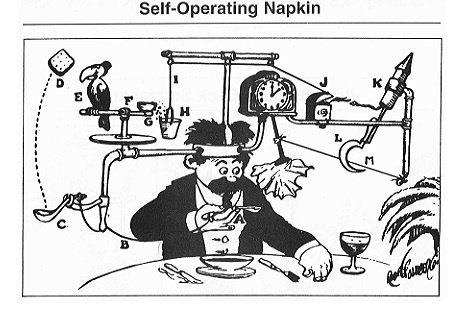

Maybe in 1967 it was true that computers could only handle exclusive binary categories. I don’t know: I was divided into a pair of gametes at the time myself. But nowadays data models can be as complex as you like. Go ahead, add another column to the database. No technical limit will stop you. Make categories perspectival, by linking each tag to a specific observer. Allow contradictory tags. If you’re still worried that things are too simple, make each variable a probability distribution. The computer won’t break a sweat, although your data model may look like the accompanying illustration to human readers.

I just wrote an article, for instance, where I consider eighteen different sources of testimony about genre — each of which models a genre in ways that can implicitly conflict with, or overlap with, other definitions. I trust you can see the danger: it’s not that the argument will be too reductive. I was willing to run a risk of complexity in this case because I was tired of being told that computers force everything into binaries. Machine learning is actually good at eschewing fixed categories to tease out loose family resemblances; it can be every bit as perspectival, multifaceted, and blurry as you wanna be.

I hope my article manages to remain lively, but I think readers will discover, by the end, that it could have succeeded with a simpler data model. When I rework it for the book version, I may do some streamlining.

It’s okay to simplify the world in order to investigate a specific question. That’s what smart qualitative scholars do themselves, when they’re not busy giving impractical advice to their quantitative friends. Max Weber and Hannah Arendt didn’t make an impact on their respective fields — or on public life — by adding the maximum amount of nuance to everything, so their models could represent every aspect of reality at once, and also function as self-operating napkins.

Because distant readers use larger corpora and more explicit data models than is usual for literary study, critics of the field (internal as well as external) have a tendency to push on those visible innovations, asking “What is still left out?” Something will always be left out, but I don’t think distant reading needs to be pushed toward even more complexity and completism. Computers make those things only too easy. Instead distant readers need to struggle to retain the vividness and polemical verve of the best literary criticism, and the “combination of simplicity and strength” that characterizes useful social theory.