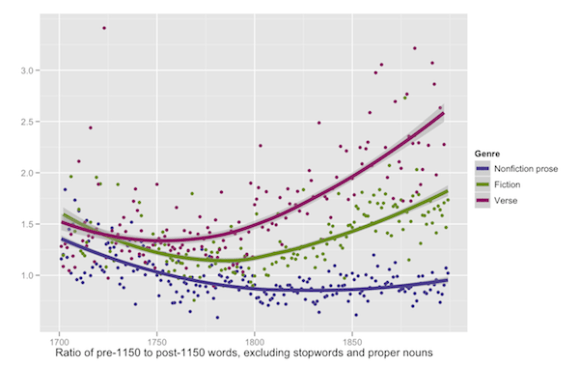

I’ve been having an interesting discussion with Lisa Rhody about the significance of topic modeling at different scales, and I’d like to follow it up with some examples.

I’ve been doing topic modeling on collections of eighteenth- and nineteenth-century volumes, using volumes themselves as the “documents” being modeled. Lisa has been pursuing topic modeling on a collection of poems, using individual poems as the documents being modeled.

The math we’re using is probably similar. I believe Lisa is using MALLET. I’m using a version of Latent Dirichlet Allocation that I wrote in Java so I could tinker with it.

But the interesting question we’re exploring is this: How does the meaning of LDA change when it’s applied to writing at different scales of granularity? Lisa’s documents (poems) are a typical size for LDA: this technique is often applied to identify topics in newspaper articles, for instance. This is a scale that seems roughly in keeping with the meaning of the word “topic.” We often assume that the topic of written discourse changes from paragraph to paragraph, “topic sentence” to “topic sentence.”

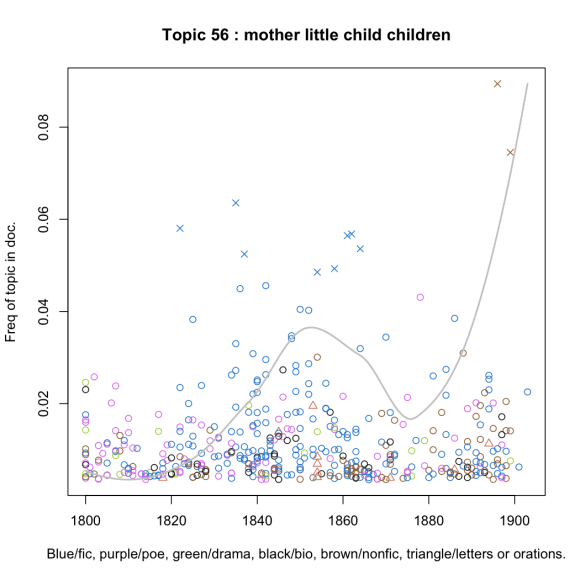

By contrast, I’m using documents (volumes) that are much larger than a paragraph, so how is it possible to produce topics as narrowly defined as this one?

This is based on a generically diverse collection of 1,782 19c volumes, not all of which are plotted here (only the volumes where the topic is most prominent are plotted; the gray line represents an aggregate frequency including unplotted volumes). The most prominent words in this topic are “mother, little, child, children, old, father, poor, boy, young, family.” It’s clearly a topic about familial relationships, and more specifically about parent-child relationships. But there aren’t a whole lot of books in my collection specifically about parent-child relationships! True, the most prominent books in the topic are A. F. Chamberlain’s The Child and Childhood in Folk Thought (1896) and Alice Morse Earle’s Child Life in Colonial Days (1899), but most of the rest of the prominent volumes are novels by, for instance, Catharine Sedgwick, William Thackeray, Louisa May Alcott, and so on. Since few novels are exclusively about parent-child relations, how can the differences between novels help LDA identify this topic?

The answer is that the LDA algorithm doesn’t demand anything remotely like a one-to-one relationship between documents and topics. LDA does use the differences between documents to distinguish topics, but it treats every document as a mixture: each one contains a bit of every topic, in different proportions. The numerical variation of topic proportions between documents provides a kind of mathematical leverage that distinguishes topics from each other.
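To make the idea of mixed proportions concrete, here is a minimal Python sketch of the generative story that LDA assumes. It is not the Java implementation mentioned above. Several things are simplifying assumptions for illustration only: the two hypothetical word lists (borrowed from topics discussed in this post), the uniform choice of words within a topic, and the names `sample_dirichlet` and `generate_document`.

```python
import random

# Hypothetical stand-ins for two topics; real LDA topics are weighted
# distributions over the whole vocabulary, not short uniform lists.
TOPICS = [
    ["mother", "little", "child", "children", "father", "family"],
    ["love", "life", "soul", "world", "god", "death"],
]

def sample_dirichlet(alpha, rng):
    """Draw topic proportions from a Dirichlet via normalized gamma draws."""
    draws = [rng.gammavariate(a, 1.0) for a in alpha]
    total = sum(draws)
    return [d / total for d in draws]

def generate_document(topics, alpha, length, rng):
    """Generate one document as a mixture of all topics."""
    theta = sample_dirichlet(alpha, rng)  # this document's topic proportions
    words = []
    for _ in range(length):
        # Pick a topic for this word according to the document's proportions,
        # then pick a word from that topic (uniformly, as a simplification).
        k = rng.choices(range(len(topics)), weights=theta)[0]
        words.append(rng.choice(topics[k]))
    return theta, words

rng = random.Random(0)
for doc_id in range(3):
    theta, words = generate_document(TOPICS, [1.0, 1.0], 12, rng)
    print(doc_id, [round(p, 2) for p in theta], " ".join(words))
```

Each generated document draws on both topics, but in its own proportions. Inference runs this story in reverse, recovering topics from the way those proportions vary across documents.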

The implication of this is that your documents can be considerably larger than the kind of granularity you’re trying to model. As long as the documents are small enough that the proportions between topics vary significantly from one document to the next, you’ll get the leverage you need to discriminate those topics. Thus you can model a collection of volumes and get topics that are not mere “subject classifications” for volumes.
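A small worked example may help show why larger documents still give leverage. The sketch below uses an invented corpus, not my collection: four hypothetical volumes of 100 paragraphs each, where 10, 20, 30, and 40 of the paragraphs belong to a “family” topic. It also assumes, purely for simplicity, that each paragraph belongs to exactly one topic. The function names are made up for this illustration.

```python
from statistics import pstdev

FAMILY, OTHER = 0, 1

# Hypothetical corpus: four volumes of 100 paragraphs each, with a
# different number of "family" paragraphs in each volume.
family_counts = [10, 20, 30, 40]
volumes = [[FAMILY] * n + [OTHER] * (100 - n) for n in family_counts]
paragraphs = [label for volume in volumes for label in volume]

def topic_share(labels, topic):
    """Fraction of a document's paragraphs that belong to the given topic."""
    return sum(1 for t in labels if t == topic) / len(labels)

def chunk(seq, size):
    """Split a sequence into consecutive documents of the given size."""
    return [seq[i:i + size] for i in range(0, len(seq), size)]

def spread(doc_size):
    """Standard deviation of the family topic's share across documents."""
    docs = chunk(paragraphs, doc_size)
    return pstdev([topic_share(d, FAMILY) for d in docs])

print("paragraph-sized documents:", round(spread(1), 3))
print("volume-sized documents:   ", round(spread(100), 3))
```

With paragraph-sized documents the share is always 0 or 1, so the spread is large (about 0.43). With volume-sized documents the spread shrinks (about 0.11), but it does not vanish, and that remaining variation is what the model works with.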

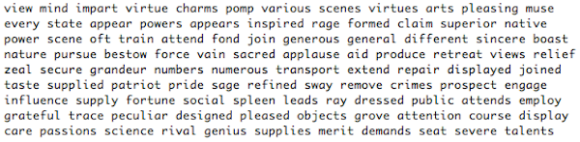

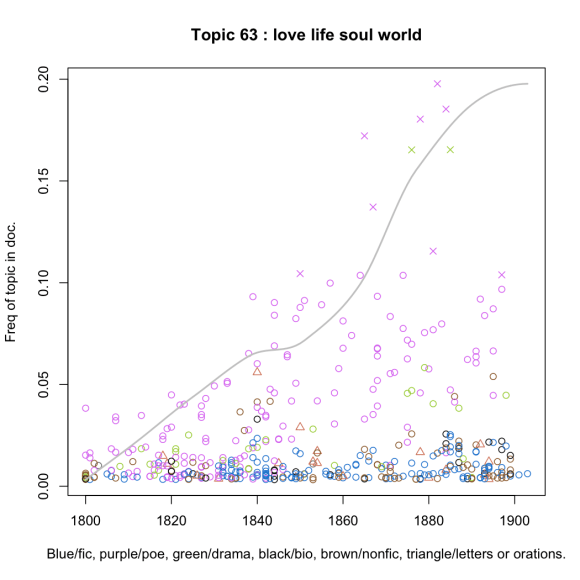

Now, in the comments to an earlier post I also said that I thought “topic” was not always the right word to use for the categories that are produced by topic modeling. I suggested that “discourse” might be better, because topics are not always unified semantically. This is a place where Lisa starts to question my methodology a little, and I don’t blame her for doing so; I’m making a claim that runs against the grain of a lot of existing discussion about “topic modeling.” The computer scientists who invented this technique certainly thought they were designing it to identify semantically coherent “topics.” If I’m not doing that, then, frankly, am I using it right? Let’s consider this example:

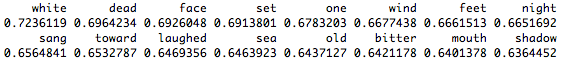

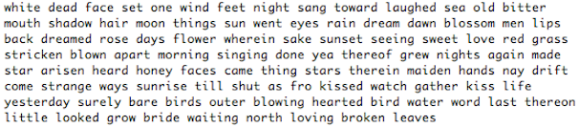

This is based on the same generically diverse 19c collection. The most prominent words are “love, life, soul, world, god, death, things, heart, men, man, us, earth.” Now, I would not call that a semantically coherent topic. There is some religious language in there, but it’s not about religion as such. “Love” and “heart” are mixed in there; so are “men” and “man,” “world” and “earth.” It’s clearly a kind of poetic diction (as you can tell from the color of the little circles), and one that increases in prominence as the nineteenth century goes on. But you would be hard pressed to identify this topic with a single concept.

Does that mean topic modeling isn’t working well here? Does it mean that I should fix the system so that it would produce topics that are easier to label with a single concept? Or does it mean that LDA is telling me something interesting about Victorian poetry — something that might be roughly outlined as an emergent discourse of “spiritual earnestness” and “self-conscious simplicity”? It’s an open question, but I lean toward the latter alternative. (By the way, the writers most prominently involved here include Christina Rossetti, Algernon Swinburne, and both Brownings.)

In an earlier comment I implied that the choice between “semantic” topics and “discourses” might be aligned with topic modeling at different scales, but I’m not really sure that’s true. I’m sure that the document size we choose does affect the level of granularity we’re modeling, but I’m not sure how radically it affects it. (I believe Matt Jockers has done some systematic work on that question, but I’ll also be interested to see the results Lisa gets when she models differences between poems.)

I actually suspect that the topics identified by LDA always have the character of “discourses.” They are, technically, “kinds of language that tend to occur in the same discursive contexts.” But a “kind of language” may or may not really be a “topic.” I suspect you’re always going to get things like “art hath thy thou,” which are better called a “register” or a “sociolect” than a “topic.” For me, this is not a problem to be fixed. After all, if I really want to identify topics, I can open a thesaurus. The great thing about topic modeling is that it maps the actual discursive contours of a collection, which may or may not line up with “concepts” any writer ever consciously held in mind.

Computer scientists don’t understand the technique that way.* But on this point, I think we literary scholars have something to teach them.

On the collective course blog for English 581 I have some other examples of topics produced at a volume level.

*[UPDATE April 3, 2012: Allen Riddell rightly points out in the comments below that Blei’s original LDA article is elegantly agnostic about the significance of the “topics” — which are at bottom just “latent variables.” The word “topic” may be misleading, but computer scientists themselves are often quite careful about interpretation.]

Documentation / open data:

I’ve put the topic model I used to produce these visualizations on GitHub. It’s in the subfolder 19th150topics under the folder BrowseLDA. Each folder contains an R script that you run; it then prompts you to load the data files included in the same folder, and allows you to browse around in the topic model, visualizing each topic as you go.

I have also pushed my Java code for LDA up to GitHub. But really, most people are better off with MALLET, which is much faster and has hyperparameter optimization that I haven’t added yet. I wrote this just so that I would be able to see all the moving parts and understand how they worked.
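For readers who want to see the moving parts at a glance, here is a minimal sketch of collapsed Gibbs sampling, one common way of fitting LDA. It is written in Python for brevity and is not a transcription of the Java code mentioned above. The function name `gibbs_lda`, the toy vocabulary, the toy documents, and the fixed symmetric values of `alpha` and `beta` are all assumptions made for illustration, and there is no hyperparameter optimization.

```python
import random

def gibbs_lda(docs, num_topics, vocab_size, alpha=0.1, beta=0.01,
              iterations=100, seed=0):
    """Collapsed Gibbs sampling for LDA; docs are lists of word ids."""
    rng = random.Random(seed)
    doc_topic = [[0] * num_topics for _ in docs]                # tokens per topic, per doc
    topic_word = [[0] * vocab_size for _ in range(num_topics)]  # word counts per topic
    topic_total = [0] * num_topics                              # total tokens per topic
    assignments = []

    # Start from a random topic assignment for every token.
    for d, doc in enumerate(docs):
        z = [rng.randrange(num_topics) for _ in doc]
        assignments.append(z)
        for w, k in zip(doc, z):
            doc_topic[d][k] += 1
            topic_word[k][w] += 1
            topic_total[k] += 1

    topics = list(range(num_topics))
    for _ in range(iterations):
        for d, doc in enumerate(docs):
            for i, w in enumerate(doc):
                # Remove this token's current assignment from the counts.
                k = assignments[d][i]
                doc_topic[d][k] -= 1
                topic_word[k][w] -= 1
                topic_total[k] -= 1
                # Weight each topic by how much this document uses it
                # times how strongly the topic favors this word.
                weights = [
                    (doc_topic[d][t] + alpha)
                    * (topic_word[t][w] + beta)
                    / (topic_total[t] + vocab_size * beta)
                    for t in topics
                ]
                k = rng.choices(topics, weights=weights)[0]
                # Record the newly sampled assignment.
                assignments[d][i] = k
                doc_topic[d][k] += 1
                topic_word[k][w] += 1
                topic_total[k] += 1
    return doc_topic, topic_word

# Toy data (hypothetical): word ids index into VOCAB.
VOCAB = ["mother", "child", "father", "love", "soul", "death"]
docs = [[0, 1, 2, 0, 1, 3], [3, 4, 5, 4, 3, 1],
        [0, 2, 1, 1, 4, 0], [5, 4, 3, 3, 5, 2]]
doc_topic, topic_word = gibbs_lda(docs, num_topics=2, vocab_size=len(VOCAB))

for k, counts in enumerate(topic_word):
    ranked = sorted(range(len(VOCAB)), key=lambda w: -counts[w])
    print("topic", k, [VOCAB[w] for w in ranked[:3]])
```

The two count tables are the whole state of the model: `doc_topic` gives each document’s mixture of topics, and `topic_word` gives each topic’s most prominent words. On a corpus this small the output is not meaningful; the point is only to show the bookkeeping.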