Although distant reading always involves work, some parts of the task have an immediate reward. Solving these problems gives you an article with clear disciplinary significance, or at least a sparkly website. You could call these problems the glamorous ones.

Other problems are just nasty. They require a lot of detail work that never sees the light of day, and when you’re done, all you have is an intermediate product that makes further inquiry possible. The research community as a whole benefits, but you don’t get your picture taken with the Mayor. At most, if you’re lucky, you get a couple of data or code citations.

Perspectives vary, of course: problems that look nasty to literary scholars might be glamorous in information science. But untenured scholars understandably want problems that count as glamorous in their home disciplines. Even post-tenure, I only do thankless work in the dead of night if I can get help and/or grant funding, because I’m not a masked billionaire vigilante. For the last several years, I’ve been collaborating with HathiTrust Research Center to create a public dataset of word counts for English-language literature 1800-2000, and we’re only halfway done with that slightly nasty task.

So I’m not volunteering to tackle any of the three problems that follow. I’m just putting up a bat signal, in case there’s a weary private detective out there who might be the heroine Gotham City needs right now.

1: Proving how much ngram information is safe to share.

In order to do research beyond the wall of copyright, we often need to share derived data about books, instead of the original text. The question is, how much information can you share, legally, before it becomes possible to reconstruct the book?

There’s already some good research on this problem, and it might be 90% solved. But it’s a Rasputin-like problem that will keep coming back to life if you only kill it 90% dead. We need someone to bury it, which probably means someone with real CS training who can produce a specific, confident answer you could show a lawyer. In particular, there are tricky questions about interactions between different levels of aggregation. Suppose (for instance) you had page-level counts of single words, plus volume-level counts of trigrams. How much of a book, at most, could you reconstruct, given real-life variations in book length and page length?
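To make the worry concrete, here is a toy sketch in Python of the simplest kind of reconstruction: chaining trigrams together wherever only one continuation is possible. It is an illustration under loud assumptions, not an answer to the legal question. The function names are my own inventions, it looks only at which trigrams occur (not how often), and it ignores the page-level word counts that make the real problem hard.

```python
from collections import Counter, defaultdict


def trigram_counts(words):
    """Volume-level derived data: a count for each 3-word sequence."""
    return Counter(zip(words, words[1:], words[2:]))


def longest_unambiguous_run(counts, start):
    """Extend the trigram `start` to the right for as long as exactly one
    distinct trigram continues the last two words.

    Hypothetical helper for illustration: it uses only the set of trigrams
    that occur, and stops at the first fork, dead end, or repeated trigram.
    """
    successors = defaultdict(set)
    for first, second, third in counts:
        successors[(first, second)].add(third)

    run = list(start)
    seen = {start}
    while True:
        options = successors.get((run[-2], run[-1]), set())
        if len(options) != 1:
            break  # a fork or a dead end: we can no longer be sure
        next_word = next(iter(options))
        trigram = (run[-2], run[-1], next_word)
        if trigram in seen:
            break  # guard against cycling through repeated phrases
        seen.add(trigram)
        run.append(next_word)
    return run


if __name__ == "__main__":
    text = "the cat sat on the mat and the cat ran off".split()
    counts = trigram_counts(text)
    print(" ".join(longest_unambiguous_run(counts, ("cat", "sat", "on"))))
```

In this tiny example the chain stops as soon as it reaches "the cat" a second time, because two different words can follow. A real answer would need to say how often forks like that occur in full-length books, and how much page-level counts help an adversary resolve them.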

2: Date-of-first-publication metadata.

We’re beginning to assemble large literary collections. But some of them include a lot of reprints, published decades or centuries after the first edition. That’s not necessarily a bad thing, but we will probably also want datasets that are deduplicated, or limited to first editions.

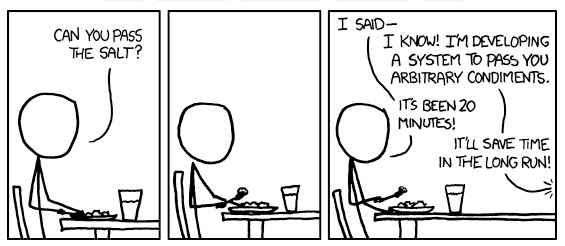

The beautiful, general solution to this problem probably requires linked data, and FRBR protocols, and a great deal of discussion. But see xkcd on solutions to “general problems.”

The solution Gotham City needs right now is probably more like a list of 50,000 titles, associated with dates of first publication.

This may be the next nasty problem I tackle. I suspect it would benefit from a tiered solution, where you produce a small amount of hand-checked data, plus a large amount of scraped or algorithmically-guessed data with a lower level of confidence.
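One way to picture a tiered dataset is a flat list of records, each labeled with how it was produced, plus a rule that prefers the most trustworthy record for each work. The sketch below is only a guess at what that could look like. The tier names, the field names, and the `best_records` helper are all hypothetical, and matching works by lowercased author and title is much cruder than real deduplication would need to be.

```python
from dataclasses import dataclass

# Hypothetical tiers, ordered from most to least trustworthy.
TIER_RANK = {"hand_checked": 0, "scraped": 1, "guessed": 2}


@dataclass(frozen=True)
class FirstPubRecord:
    title: str
    author: str
    first_pub_year: int
    tier: str  # one of the keys in TIER_RANK


def best_records(records):
    """Keep one record per (author, title), preferring the better tier.

    When two records share a tier, the one seen first is kept.
    """
    best = {}
    for rec in records:
        key = (rec.author.lower(), rec.title.lower())
        current = best.get(key)
        if current is None or TIER_RANK[rec.tier] < TIER_RANK[current.tier]:
            best[key] = rec
    return best


if __name__ == "__main__":
    records = [
        # A date taken from a reprint, produced by an algorithmic guess.
        FirstPubRecord("Frankenstein", "Mary Shelley", 1831, "guessed"),
        # The same work, checked by hand against the first edition.
        FirstPubRecord("Frankenstein", "Mary Shelley", 1818, "hand_checked"),
    ]
    for rec in best_records(records).values():
        print(rec.title, rec.first_pub_year, rec.tier)
```

Keeping the tier on every record, instead of throwing away the low-confidence rows, would let researchers decide for themselves how much uncertainty their question can tolerate.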

3: A half-decent eighteenth-century collection.

OCR problems before 1800 are real, but the universe of English literature before 1800 is also small enough that it’s possible to imagine creating a reasonable sample of hand-corrected texts. The eMOP crowd-sourcing initiative may be the solution here. Or a very modest supplement to ECCO-TCP might be sufficient. I don’t know; I understand nineteenth-century digital collections much better.

***

A post like this one may seem to be encouraging people, generally, to tackle more nasty problems, but that’s not what I intend. Actually, I think scholars who work with computers tend to have a temperament that makes us all too willing to work on unglamorous infrastructure & markup problems, in the faith that they will eventually produce a general solution useful to everyone.

Fields that aren’t yet securely central to a discipline sometimes need to emphasize shorter-term thinking. But maybe distant reading is getting secure enough that a few of us can afford to do vigilante work on the side. Or maybe there are computer scientists out there who just need to see a bat signal.

Feel free to suggest more nasty problems in the comments.