[Used to have a more boring title still preserved in the URL. -Ed.] In general I’m deeply optimistic about the potential for dialogue between the humanities and quantitative disciplines. I think there’s a lot we can learn from each other, and I don’t think the humanities need any firewall to preserve their humanistic character.

But there is one place where I’m coming to agree with people who say that quantitative methods can make us dumber. To put it simply: numbers tend to distract the eye. If you quantify part of your argument, critics (including your own internal critic) will tend to focus on problems in the numbers, and ignore the deeper problems located elsewhere.

I’ve discovered this in my own practice: for instance, when I blogged about genre in large digital collections, I got a lot of useful feedback on those blog posts; it was probably the most productive conversation I’ve ever had as a scholar. But most of the feedback focused on potential problems in the quantitative dimension of my argument. E.g., how representative was this collection as a sample of print culture? Or, what smoothing strategies should I be using to plot results? My own critical energies were focused on similar questions.

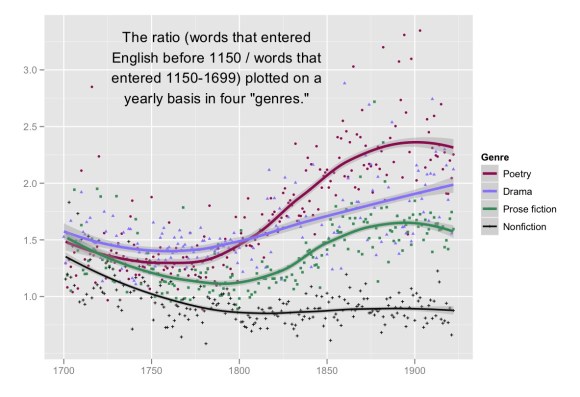

Those questions were useful, and improved the project greatly, but in most cases they didn’t rock its foundations. And with a year’s perspective, I’ve come to recognize that there were after all foundation-rocking questions to be posed. For instance, in early versions of this project, I hadn’t really ironed out the boundary between “poetry” and “drama.” Those categories overlap, after all! This wasn’t creating quantitative problems (Jordan Sellers and I were handling cases consistently), but it was creating conceptual ones: the line “poetry” below should probably be labeled “nondramatic verse.”

Skepticism about foundational concepts has been one of the great strengths of the humanities. The fact that we have a word for something (say genre or the individual) doesn’t necessarily imply that any corresponding entity exists in reality. Humanists call this mistake “reification,” and we should hold onto our skepticism about it. If I hand you a twenty-page argument using Google ngrams to prove that the individual has been losing ground to society over the last hundred years, your response should not be “yeah, but how representative is Google Books, and how good is their OCR?” (Those problems are relatively easy to solve.) Your response should be, “Uh … how do you distinguish ‘the individual’ from ‘society’ again?”

As I said, humanists have been good at catching reification; it’s a strength we should celebrate. But I don’t see this habit of skepticism as an endangered humanistic specialty that needs to be protected by a firewall. On the contrary, we should be exporting our skepticism! This habit of questioning foundational concepts can be just as useful in the sciences and social sciences, where quantitative methods similarly distract researchers from more fundamental problems. [I don’t mean to suggest that it’s never occurred to scientists to resist this distraction: as Matt Wilkens points out in the comments, they’re often good at it. -Ed.]

In psychology, for instance, emphasis on clearing a threshold of statistical significance (defined as a p-value) frequently distracts researchers from more fundamental questions of experimental design (like, are we attempting to measure an entity that actually exists?). Andrew Gelman persuasively suggests that this is not just a problem caused by quantification but can be more broadly conceived as a “dangerous lure of certainty.” In any field, it can be tempting to focus narrowly on the degree of certainty associated with a hypothesis. But it’s often more important to ask whether the underlying question is interesting and meaningfully framed.

On the other hand, this doesn’t mean that humanists need to postpone quantitative research until we know how to define long-debated concepts. I’m now pretty skeptical about the coherence of this word genre, for instance, but it’s a skepticism I reached precisely by attempting to iron out details in a quantitative model. Questions about accuracy can prompt deeper conceptual questions, which reframe questions of accuracy, in a virtuous cycle. The important thing, I think, is not to let yourself stall out on the “accuracy” part of the cycle: it offers a tempting illusion of perfectibility, but that’s not actually our goal.

Postscript: Scott Weingart conveys the point I’m trying to make in a nicely compressed way by saying that it flips the conventional worry that the mere act of quantification will produce unearned trust. In academia, the problem is more often inverse: we’re so strongly motivated to criticize numbers that we forget to be skeptical about everything else.

19 replies on “One way numbers can after all make us dumber.”

Well put, Ted. And I agree that quantitative methods — or the attempt to develop them — help us think about concepts that are otherwise easy to take for granted. I’ve seen that in my own work with allegory.

I wonder a little bit, though, about the larger claim that the humanities have any special purchase on skepticism. I guess I’d say that we tend to think about big, amorphous problems, so maybe we see more way-off-base approaches and are therefore somewhat more attuned to that possibility. But aren’t we really just talking about doing good academic work? Question your assumptions, contemplate the possibility that you could be coming at things from the wrong angle, etc? Wouldn’t good chemists or psychologists or political scientists do the same thing?

It’s not that I think the humanities are bad at this (or anything else, really). It’s just that I’m not convinced in the end that there’s any uniquely “humanities” approach to knowledge. I’d say that there are humanities *objects* of knowledge and that those objects can reward some methods more than others. But to me, skepticism indicates an orientation within epistemology itself.

I say all this because I’m a little put off not by anything you said, but by the larger conversation about preserving the humanities. I hear a lot of noble sentiments about how the humanities should be supported because they (alone, I think, is the implication) foster critical thinking, skepticism, analysis, etc. And all I can think is that it must be news to people in other disciplines that they’re not interested in (or good at) those things, and that it mostly just makes us seem ignorant for suggesting as much.

I guess I’d say there are things to export from the humanities, but those things are methods for dealing with classes of objects rather than any epistemic outlook.

I think I mostly agree with this, Matt. I also tend to be put off by the kind of idealization of the humanities you describe. And I would firmly agree that there’s no uniquely “humanistic” approach to knowledge; that’s exactly what I mean to imply when I say we don’t need any firewalls.

The post does probably overstate how much of a comparative advantage humanists have at questioning foundational concepts. But the overstatement is a slip due to rhetorical compression more than a settled opinion. I don’t actually think resistance to reification is unique to the humanities. I know that it sounds that way when I talk about “exporting” it, but of course lots of scientific disciplines are very thoughtful about their core concepts. I know that psychologists question whether there’s actually an entity called “personality,” etc. In the end I’m less interested in the import/export business than in acknowledging a broader tension between critiques of accuracy and critiques of conceptual framing, which could play out in any field.

I do think that humanists are especially good at resisting one kind of reification, which is the assumption that we can operate with concepts as if they had a constant meaning across the time axis. There, I think, the historical character of our disciplines makes us good at recognizing instabilities that other fields often gloss over. (Ironically, I just wrote a book arguing that we sometimes take this emphasis on historical discontinuity too far — but that’s not a contradiction, it’s … um, a dialectic.)

Ah, on this I agree entirely. And I’m really, really interested in problems of historical continuity/discontinuity. I have the Kindle sample of your book on my iPod and am looking forward to reading the whole thing. My own manuscript is about the mechanics of periodization, but/and a lot of the computational work I’ve seen makes me think that the story is even more complicated than the version I’ve worked out in the past.

Oh well … next book.

Great post! It’s coming from a quite different place, but you might enjoy Jenn Lena’s work on genre in music. Her argument is that genre isn’t a coherent set of elements in the work, but rather a way of organizing communities of producers and consumers. I’m not sure how well it’d apply outside of the context of 20th and 21st century music, but might still be of interest.

Thanks, Dan! I’ll take a look at that. There are similar socially-based theories of genre in rhetorical theory: Carolyn Miller and Amy Devitt are especially good. I think recognizing genre as a social phenomenon is a helpful first step, though it may still turn out that we’re talking about social phenomena of bewilderingly different sorts.

Yes! I completely agree and am pleased you raise this point. I’m regularly troubled by how digital humanists deploy genre as though it were either a set of stable taxonomies or merely a box into which we can classify texts. Instead, genres possess structures that do ideological and hermeneutic work. It’s good for me that so few people investigate genre because it’s the topic of my dissertation, but it’s troubling nonetheless (and not at all limited to DH work; it’s endemic in literary scholarship). I’ve found the work of George Lakoff (e.g., Women, Fire, and Dangerous Things) and other cognitive scientists on how the human mind categorizes to be invaluable.

Thanks! I’ll check out Lakoff and also keep an eye peeled for your work on genre. I’m doing a lot of work with classification algorithms, but I think if you listen to the algorithms they actually end up telling you that genre isn’t a stable taxonomy.

Great post, Ted. I find the issues around genre interesting too. My doctoral thesis identified a new ‘mode’ of writing in New Zealand, so I’ve struggled with the issue myself. One of my supervisors felt it was a coherent enough body of writing to be described as a ‘genre’ but I wasn’t comfortable with that approach, because I could see various corner cases where the strong argument just didn’t work. I ended up adapting Northrop Frye’s ‘theory of modes’. I like the way it allows for variation across bodies of texts, and accepts variations in their socio-cultural function over time as well.

This is exactly the issue I struggle with. I feel like the concepts of “form,” “genre,” and “mode” shade into each other fairly smoothly. E.g., a concept like “the gothic” can be divided up in a wide range of different ways, some of which look more like genres (imperial gothic) and some more like modes (gothic romance). On the other hand, our ways of thinking about poetic genre often have a strongly formal character. Not to mention that some genres are a mosaic of other genres — e.g. “the ballad opera” or “life-and-letters.” It’s dizzying.

But I actually think this is part of the rationale for a digital approach to the phenomenon. Numbers are very useful when you’re dealing with continuous questions of degree. E.g., instead of deciding whether “Southern gothic” is a genre or a mode we might situate it somewhere on that spectrum.

Actually, your post reminded me of Frye, but on checking the few pages of my thesis that directly deal with the issue, I remember Alastair Fowler’s book Kinds of Literature: An Introduction to the Theory of Genres and Modes helped more. I like his notion that modal terms never imply a complete external form; they represent a mixture of various generic elements and are often amorphous and transitory in historical terms. It’d be interesting if you could collapse quantification to that level – of modal attributes – and use the results to critique the value of more rigid forms of generic classification. The relationships between genres and modes could prove fascinating, especially if you could chart their development over time. How you would define and code identifiable modal attributes I don’t know.

What I’ve found so far in practice is that forms and genres are pretty easy to identify digitally with bag-of-words methods, and things I would tend to call modes are more challenging. So, e.g. dramatic verse, the Robinsonade, and the Romantic-era gothic novel are things I can train a single classifier to identify. But if I want to identify “the gothic” in a broader sense, I find I can’t do that very well with a single classifier. I would need to train multiple models spread out over the timeline to model historical variability within the mode. But I’ll check out Alastair Fowler’s book; I haven’t read it and it looks useful.
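
For readers who haven’t seen a bag-of-words classifier, here is a minimal sketch in Python. It is not the code behind this project. The toy sentences, the two labels, and the function names (`tokenize`, `train`, `predict_proba`) are all invented for illustration, and naive Bayes is just one simple choice of model.

```python
import math
from collections import Counter

def tokenize(text):
    """Lowercase and split on whitespace: a deliberately crude bag of words."""
    return text.lower().split()

def train(examples):
    """Count words per label from a list of (text, label) pairs."""
    word_counts = {}          # label -> Counter of word frequencies
    doc_counts = Counter()    # label -> number of training texts
    vocab = set()
    for text, label in examples:
        doc_counts[label] += 1
        counts = word_counts.setdefault(label, Counter())
        for word in tokenize(text):
            counts[word] += 1
            vocab.add(word)
    return word_counts, doc_counts, vocab

def predict_proba(model, text):
    """Return {label: probability} via multinomial naive Bayes, add-one smoothing."""
    word_counts, doc_counts, vocab = model
    total_docs = sum(doc_counts.values())
    log_scores = {}
    for label in doc_counts:
        counts = word_counts[label]
        total_words = sum(counts.values())
        score = math.log(doc_counts[label] / total_docs)
        for word in tokenize(text):
            if word in vocab:  # words never seen in training are ignored
                score += math.log((counts[word] + 1) / (total_words + len(vocab)))
        log_scores[label] = score
    # Turn log scores into probabilities that sum to 1.
    top = max(log_scores.values())
    unnormalized = {k: math.exp(v - top) for k, v in log_scores.items()}
    z = sum(unnormalized.values())
    return {k: v / z for k, v in unnormalized.items()}

# Hypothetical training texts, made up for this sketch.
TOY_EXAMPLES = [
    ("the castle ghost haunted the ruined abbey at midnight", "gothic"),
    ("a spectre in the dark vault filled her with terror", "gothic"),
    ("shipwrecked on the island he built a hut and a canoe", "robinsonade"),
    ("alone on the desert island he salvaged tools from the wreck", "robinsonade"),
]

model = train(TOY_EXAMPLES)
probs = predict_proba(model, "a ghost walked the abbey in terror")
best_label = max(probs, key=probs.get)
```

The output is a probability for each label rather than a flat yes or no, which is one way of treating category membership as a question of degree. Changing the labels in `TOY_EXAMPLES` and calling `train` again is all it takes to reframe the categories. Modeling a mode across a long timeline would, under the same assumptions, mean training several such models on examples from different periods.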

Yes: “Genre ~~may be~~ IS a box we’ve inherited for a whole lot of basically different things.”

I thought about this kind of thing a lot when I began thinking about cultural evolution. There I wanted to liken the biological concept of species with cultural notions of genre in literature and style in music. I didn’t get very far, but thinking about the biological side proved useful.

What I learned is that we need to think about the relation between classification and causal mechanisms. Until relatively recently biological classification was based exclusively on morphology: creatures that look like one another are assumed to be the same kind of thing. So we group individuals into species, species into genera, genera into families, etc. This worked fairly well though, as always, there were rough spots.

Now, let’s add evolution to this picture. Let’s think of Darwinian evolution as a theory about differential survival based on the inheritance of characteristics from one generation to the next. This is a causal process. And it turns out that this process maps rather neatly onto morphological classification schemes (see section 4, Biological Evolution: Taxonomy Recapitulates Phylogeny, Almost, of Culture as an Evolutionary Arena). Individuals of the same species share the same gene pool. And species in the same genus also share a gene pool. And so it is on up the line.

Thus we have it that us humans share 98% of our genes with chimpanzees. To be sure, we’re not the same species, nor even the same genus. But we ARE closely related. Not only that, but we share a bunch of genes with yeast as well (I forget the percentage). Why? Well, we’re both living, aren’t we? (It’s more specific than that, but you get the idea.)

This “fit” between morphological classification and inheritance of genes works best in the multi-celled creatures and, among them, it’s best with animals. Animals don’t often form hybrids. Yes, you can cross a horse with a donkey and get a mule; but mules tend not to breed. Hybridization is much more common among plants.

The thing about hybridization is that it messes up our nice correspondence between classification by morphology and the inter-generational flow of genetic material. A hybrid creature has parents of two different species. Whoops! There goes the neighborhood. Two different gene pools now come together in a hybrid offspring.

And things get a lot worse when we look at single-celled organisms, where so-called horizontal gene transfer is rife. Here there’s even some doubt as to whether the species concept applies. I’ve seen talk of quasi-species. The relationship between morphology and inheritance has become very muddy.

[I should here note that my knowledge of biology is sketchy. This is very technical stuff, and quite controversial. Still…]

Now, one thing biologists have had to do is give up the notion that a species is some kind of essence, an essence that can be usefully characterized by a description. A species is more usefully thought of as a population of individuals, or a gene pool (John Wilkins tells me biologists are working with 22 notions of species). And those are not essences.

The important point, as I said, is that we have classification on the one hand, and we have causal processes on the other. There was a time when biologists could think of morphological classification as being neatly aligned with the causal process of genetic inheritance. But things are no longer that neat.

And it’s pretty much of a mess when we think of culture. Historical linguists have a fairly elaborate classification of the world’s languages. The classification is tree-like and is taken to be evidence of descent. The classification draws most heavily on phonology, and perhaps some syntactic features as well. At the same time we know that horizontal transfer from one language to another is common; words travel freely across linguistic borders. Let’s let that go. There is a logic to this process.

What of creoles? From Culture as an Evolutionary Arena:

What of literary genre? Who knows? One causal process is that of a writer producing a text. That writer can potentially draw on anything and everything to do so. Once the writer has produced the text, it’s put into circulation. That brings in another process. And, of course, chances are the writer has taken the need for circulation into account in the production.

Still, the process isn’t nearly so physically constrained as biological reproduction, and that’s pretty messy in single-celled organisms.

I don’t see how anything neat and clean can be pulled out of the notion of genre. Literary process is too various and messy. It seems to me we’ve got to ask: what do we want from this act of classification? What kind of causal process are we trying to uncover? And we’re going to have to give up on coming up with a classification process that reveals the “essence” of texts.

Wow, Bill — there’s a lot here, and it’s fascinating. I haven’t explored the evolutionary analogy because it does seem fraught with problems like the one you describe. Still unclear to me what would count as “descent” or “selection” in this domain.

And I agree entirely with your observations about genre in the last paragraph. What I like about a digital approach to genre, actually, is that it doesn’t presuppose stable categories, and can be entirely provisional. Want to frame the categories differently? That’s fine, just change the training examples and hit “run” a second time. You can’t do that if you’re classifying 469,000 volumes by hand.

But the word “classification” itself may be less than ideal, because for most laypeople it’s going to call up visions of an underlying taxonomic order, and as you rightly say, that can’t really be our goal. The categories we design are going to be provisional boundaries designed to support specific kinds of inquiry. Instead of “classification,” the better general term might be “predictive modeling.”

I explored the biological analogy, Ted, because I have a long-standing commitment to the notion that cultural change is an evolutionary process. I looked at biology, not with the expectation that I’d find models that I could appropriate more or less as-is, but simply to learn something about evolution in a domain where a lot of the details are being worked out. At the same time, lots of those details are in dispute. Surprise! Surprise!

I’d say that the main thing I got out of writing that paper, Culture as an Evolutionary Arena, is a better understanding of the relationship between biological classification and the process of evolution. I went into it knowing that, of course, there is some relationship. After all, traditionally biologists have determined whether or not individuals are of the same species, or whether species are related through descent, on the basis of descriptions yielding classifications. In the process of writing that paper I started drawing diagrams (included in the paper of course) illustrating the relationship between classification and descent. And drawing those diagrams proved to be just a little tricky and very useful. Nothing deep, but useful.

As for “descent” and “selection” in the literary domain I’d say that to a first approximation authors determine descent and readers do selection. That is, authors determine what pre-existing stuff – words, tropes, character types, plot elements, overall plot schemes, etc. – gets into a text. Readers figure out which texts will have an active presence in the culture by their choice of texts to read.

Which doesn’t tell you much about anything in your collections. The thing about the sort of work you do is that an obscure text read by only a few readers has the same “weighting” as a popular text read by 100s of thousands. And to work out evolutionary relationships you need to keep track of dates, which can of course be done. But I wouldn’t think that’s a high priority at the moment.

Still, geneticists look at piles of genes and, in topic analysis, you’re looking at piles of words. They’re interested in what genes “hang” together in yielding phenotypic traits and you’re interested in simply identifying groups of words that hang together in context. So there’s crude similarities.

And you’re right that the word “classification” is, for most people, scholars included, “going to call up visions of an underlying taxonomic order.” On the other hand “predictive modeling” isn’t going to call up anything at all. It’s a sophisticated concept embedded in a conceptual matrix that’s quite different from existing humanistic conceptual schemes. It calls for theorizing of a different kind.

That, it seems to me, is where we are. The old concepts aren’t working. The new ones haven’t been created.

I find it interesting that Frye’s Anatomy of Criticism is being invoked in this discussion. That book’s over a half century old; it came out the same year, 1957, that Chomsky’s Syntactic Structures came out. I own Fowler’s Kinds of Literature and I read around in it; but I don’t remember it at all. My impression is that he’s playing in pretty much the same conceptual universe that Frye was in, and that universe just doesn’t have the tools we need.

A final note. When I was working on my music book (Beethoven’s Anvil) I came across at least two charts (I don’t have any links readily at hand) depicting relationships among styles of popular music in 20th Century America. Neither chart took the form of a tree. Both showed the music riddled with stylistic convergences and divergences. I don’t think either of those charts were based on a rigorous methodology. They were prepared by people who’d listened to a lot of music and knew a lot of the history. The obvious thing to do in this era of “big data” is to digitize all the recordings and then search them for bundles of features that hang together, bundles that break up, and so forth. Chart the history THAT way. I guess that in order to get something like that going you might want to “seed” it with the relationship set forth in those informal charts.

Thanks! I definitely didn’t mean to argue against biological analogies; just noting that the analogy would be complex, and I haven’t looked into it deeply.

The experiment you propose in your last paragraph wouldn’t be easy, but in principle it makes sense. The “seeding” process might be analogous to “semi-supervised learning.”


> the line “poetry” below should probably be labeled “nondramatic verse.”

I’d suggest you split drama into verse-drama and non-verse-drama instead. It would better reflect the traditional generic distinction, and would differentiate feature (verse/nonverse) from genre (poetry/drama) more accurately, since there’s plenty of non-verse-drama in your period.

I definitely plan to make subdivisions within drama as well. But even the categories of “verse” and “prose drama” overlap in interesting ways. E.g., “ballad operas” tend to mix verse and prose (heavy on the prose), as do lots of verse tragedies (heavier on the verse). The layers of potential complexity never stop with this stuff.
