If the Internet is good for anything, it’s good for speeding up the Ent-like conversation between articles, to make that rumble more perceptible by human ears. I thought I might help the process along by summarizing the Stanford Literary Lab’s latest pamphlet — a single-authored piece by Franco Moretti, “‘Operationalizing’: or the function of measurement in modern literary theory.”

One of the many strengths of Moretti’s writing is a willingness to dramatize his own learning process. This pamphlet situates itself as a twist in the ongoing evolution of “computational criticism,” a turn from literary history to literary theory.

Measurement as a challenge to literary theory, one could say, echoing a famous essay by Hans Robert Jauss. This is not what I expected from the encounter of computation and criticism; I assumed, like so many others, that the new approach would change the history, rather than the theory of literature ….

Measurement challenges literary theory because it asks us to “operationalize” existing critical concepts — to say, for instance, exactly how we know that one character occupies more “space” in a work than another. Are we talking simply about the number of words they speak? or perhaps about their degree of interaction with other characters?
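To make the contrast concrete, here is a minimal sketch of those two candidate measures. Everything in it is an assumption for illustration: the `SPEECHES` data is an invented toy scene rather than a transcription of any play, and the function names `words_spoken` and `interaction_degree` are hypothetical, not taken from the pamphlet.

```python
from collections import Counter

# Invented toy data: (speaker, addressee, line) triples. Not a real transcription.
SPEECHES = [
    ("Hamlet", "Horatio", "there are more things in heaven and earth"),
    ("Horatio", "Hamlet", "my lord"),
    ("Hamlet", "Gertrude", "mother you have my father much offended"),
    ("Claudius", "Hamlet", "how is it that the clouds still hang on you"),
]

def words_spoken(speeches):
    """Operationalization 1: total number of words each character speaks."""
    counts = Counter()
    for speaker, _addressee, line in speeches:
        counts[speaker] += len(line.split())
    return counts

def interaction_degree(speeches):
    """Operationalization 2: number of distinct characters each one exchanges speech with."""
    partners = {}
    for speaker, addressee, _line in speeches:
        partners.setdefault(speaker, set()).add(addressee)
        partners.setdefault(addressee, set()).add(speaker)
    return {name: len(others) for name, others in partners.items()}

print(words_spoken(SPEECHES))
print(interaction_degree(SPEECHES))
```

Even on this toy scene the two measures disagree: the invented Gertrude speaks no words at all yet still has one interaction partner, and Claudius outtalks Horatio while having exactly the same degree. Choosing between the measures is already a theoretical decision about what “space” means.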

Moretti uses Alex Woloch’s concept of “character-space” as a specific example of what it means to operationalize a concept, but he’s more interested in exploring the broader epistemological question of what we gain by operationalizing things. When literary scholars discuss quantification, we often tacitly assume that measurement itself is on trial. We ask ourselves whether measurement is an adequate proxy for our existing critical concepts. Can mere numbers capture the ineffable nuances we assume those concepts possess? Here, Moretti flips that assumption and suggests that measurement may have something to teach us about our concepts: as we’re forced to make them concrete, we may discover that we understood them imperfectly. At the end of the article, he suggests for instance (after begging divine forgiveness) that Hegel may have been wrong about “tragic collision.”

I think Moretti is frankly right about the broad question this pamphlet opens. If we engage quantitative methods seriously, they’re not going to remain confined to empirical observations about the history of predefined critical concepts. Quantification is going to push back against the concepts themselves, and spill over into theoretical debate. I warned y’all back in August that literary theory was “about to get interesting again,” and this is very much what I had in mind.

At this point in a scholarly review, the standard procedure is to point out that a work nevertheless possesses “oversights.” (Insight, meet blindness!) But I don’t think Moretti is actually blind to any of the reflections I add below. We have differences of rhetorical emphasis, which is not the same thing.

For instance, Moretti does acknowledge that trying to operationalize concepts could cause them to dissolve in our hands, if they’re revealed as unstable or badly framed (see his response to Bridgman on pp. 9-10). But he chooses to focus on a case where this doesn’t happen. Hegel’s concept of “tragic collision” holds together, on his account; we just learn something new about it.

In most of the quantitative projects I’m pursuing, this has not been my experience. For instance, in developing statistical models of genre, the first thing I learned was that critics use the word genre to cover a range of different kinds of categories, with different degrees of coherence and historical volatility. Instead of coming up with a single way to operationalize genre, I’m going to end up producing several different mapping strategies that address patterns on different scales.

Something similar might be true even about a concept like “character.” In Vladimir Propp’s Morphology of the Folktale, for instance, characters are reduced to plot functions. Characters don’t have to be people or have agency: when the hero plucks a magic apple from a tree, the tree itself occupies the role of “donor.” On Propp’s account, it would be meaningless to represent a tale like “Le Petit Chaperon Rouge” as a social network. Our desire to imagine narrative as a network of interactions between imagined “people” (wolf ⇌ grandmother) presupposes a separation between nodes and edges that makes no sense for Propp. But this doesn’t necessarily mean that Moretti is wrong to represent Hamlet as a social network: Hamlet is not Red Riding Hood, and tragic drama arguably envisions character in a different way. In short, one of the things we might learn by operationalizing the term “character” is that the term has genuinely different meanings in different genres, obscured for us by the mere continuity of a verbal sign. [I should probably be citing Tzvetan Todorov here, The Poetics of Prose, chapter 5.]

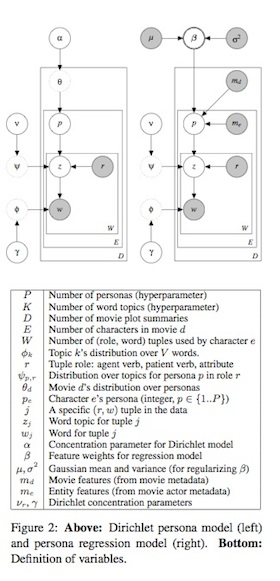

The illustration on the right is a probabilistic graphical model drawn from a paper on the “Latent Personas of Film Characters” by Bamman, O’Connor, and Smith. The model represents a network of conditional relationships between variables. Some of those variables can be observed (like words in a plot summary w and external information about the film being summarized md), but some have to be inferred, like recurring character types (p) that are hypothesized to structure film narrative.

Having empirically observed the effects of illustrations like this on literary scholars, I can report that they produce deep, Lovecraftian horror. Nothing looks bristlier and more positivist than plate notation.

But I think this is a tragic miscommunication produced by language barriers that both sides need to overcome. The point of model-building is actually to address the reservations and nuances that humanists correctly want to interject whenever the concept of “measurement” comes up. Many concepts can’t be directly measured. In fact, many of our critical concepts are only provisional hypotheses about unseen categories that might (or might not) structure literary discourse. Before we can attempt to operationalize those categories, we need to make underlying assumptions explicit. That’s precisely what a model allows us to do.

It’s probably going to turn out that many things are simply beyond our power to model: ideology and social change, for instance, are very important and not at all easy to model quantitatively. But I think Moretti is absolutely right that literary scholars have a lot to gain by trying to operationalize basic concepts like genre and character. In some cases we may be able to do that by direct measurement; in other cases it may require model-building. In some cases we may come away from the enterprise with a better definition of existing concepts; in other cases those concepts may dissolve in our hands, revealed as more unstable than even poststructuralists imagined. The only thing I would say confidently about this project is that it promises to be interesting.

15 replies on “Measurement and modeling.”

Got only part way through when the intense sense of déjà vu kicked in: you could as easily have been quoting from Pickering’s description of his first-hand experience of The Mangle of Practice, and the performative dance one undertakes when an ideal (“critical”?) notion meets resistance from the real world.

You know, although I used to do history of science / science studies, I haven’t read Mangle of Practice. Adding that to my reading list along with The System of Professions. Maybe over break I’ll seriously get time to open a few books.

Thanks Ted. I think Moretti’s article sums up (or re-frames in a more nuanced way) something that’s been a common thread in DH discussions for a while: that the act of formalization is itself a pathway to knowledge. Whether it’s an attempt to fit a set of documents into a single TEI standard, or re-creating an ancient society in an agent-based model, attempts by humanists to apply structure to their objects inevitably lead to refinements in theories about the objects themselves. Humanistic formalization is by no means unprecedented (Glen Worthey, Underwood, and others trace roots at least to the Russian formalists), but lots of DH *requires* it, which is why the discussion is steering back in this direction.

Elijah Meeks wrote about ORBIS, the Stanford map of the ancient Roman world: “[T]he beauty of a model is that all of these assumptions are formalized and embedded in the larger argument (in this case, the most efficient ways for different types of actors to move across the Roman world during a particular month). That formalization can be challenged, extended, enhanced and amended by, say, increased historical environmental reconstruction, experimental maritime archaeology or the addition of documented historically attested routes that adjust various abstractions. Rather than a linear text narrative, the model itself is an argument.” (https://dhs.stanford.edu/spatial-humanities/models-as-product-process-and-publication/)

It’s interesting that Moretti should find surprise in a turn from history to criticism, as it seems that both his own work and that of DH at large have been focused on this aspect of DH-inspired (or -required) formalism, and what it implies about our understanding of humanistic sources. The key advantage of our trying to squeeze complex ideas into stupid computers is our need to make explicit what we usually leave implicit – in fact, maybe this is that oft-sought-after property that binds the various schools of DH together.

Very nicely put, and thanks for the apt link to Meeks. I totally agree with what you’re both saying about the uses of formalization.

“Modeling” is actually a relatively new concept for me, and to be honest I’m still getting my head around it, but I am coming to suspect that it’s going to be a central topic of discussion in DH, or maybe just “in the humanities.”

To acknowledge a few people who have been exploring modeling in the humanities well before it crossed my radar: The Women Writers’ Project at Brown organized a conference about data modeling a few years back (http://www.wwp.brown.edu/outreach/conference/kodm2012/), and I think some of the organizers of that conference (Flanders, Jannidis) are continuing to foster discussion about different approaches to modeling. Also, there’s a very provocative (I’d say “classic” but it’s too young to be classic) essay by Willard McCarty in the Blackwell Companion. http://nora.lis.uiuc.edu:3030/companion/view?docId=blackwell/9781405103213/9781405103213.xml&chunk.id=ss1-3-7

I’m curious to see what’s coming with modeling genres, and it’s something I’ve started working on, though it’ll take me a while: The question: Given two writers who work in multiple genres–say poetry, plays, and prose fiction in the same decade, is it possible that the work of the two authors in the *same* genre is more similar than their individual work in multiple genres? I wonder if anyone’s working on this, and it’s kind of a project pipe dream while I’m working on other things…but a place I want to start is the Romantic era “long poem” and see about finding a very quantifiable thing to measure about it… Wondering if I should go with things the stylometers work on, like conjunctions, sentence length, ampersands… My interest is of course in the active verbs and subject-object constructions, or more broadly, the juxtapositioning of setting… but I’m aware this is getting into the indirect measurement trouble you’re describing here. I shall look forward to reading more on the subject.

That’s a really good idea for a test case. I think it can be done, but how easily it can be done probably depends on the nature of the “genres” you’re talking about.

What I’ve seen so far is that it’s not at all hard to separate e.g. novels from plays, and plays from lyric poetry. Even in a case like Walter Scott, where you’ve got an author working across those boundaries, genre can be discerned quite clearly. But I can’t make claims about “more or less similar” because the methods I use aren’t based on general distance metrics; they involve (automatically) selecting features that discriminate these genres and training an algorithm to recognize the boundary between them.

I think things are going to get fuzzier if by “genre” you mean e.g. “science fiction” versus “fantasy fiction.” For instance, when I tried to train a classifier to recognize the gothic, it decided that everything by William Godwin was gothic. Which is possible… but it’s probably a sign that authorship and genre are entangled. You probably know Matt Jockers’ work in Macroanalysis; he’s able to separate the signals statistically, but if I recall rightly, authorship is the stronger signal … for those kinds of “genres.”

I think I remember us testing this in David Hoover’s Authorship Attribution class at DHSI a few years ago, and finding that in some cases, genre overwhelmed authorship.

It probably comes down to what you mean by “similarity”. (I’m no expert, but) in authorship attribution, the strongest authorship signal seems to come from the most frequent 50 words (and, but, or, it, etc.), and if that’s what you’re measuring similarity on, authorship would probably overwhelm most genre signals (unless, perhaps, you were comparing prose to poetry). Looking at the less frequently occurring words (say, doctor, equipment, cancer, etc.), genre might overwhelm authorship. As Underwood mentioned, Jockers’ book deals with this explicitly in some parts.

I suspect the really principled solution to this problem would involve “modeling” again. If we want to separate genre from authorship, it’s possible to explicitly construct a model where a text is conditioned by both variables. Then, if you know authorial attribution in many cases (which after all we do), you can use the model to “factor” authorship “out” of the genre signal.

In other words, we don’t have to approach this as a trial-and-error process. When things get complicated, you can build a model that explicitly represents the complication, and then “solve” the model. This is something I’m learning from David Bamman, and I have to confess that at the moment the math involved in “solving” ad-hoc probabilistic graphical models is beyond me. But I think I may be insane enough to want to try it.
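As a rough illustration of what “factoring out” could mean, here is a deliberately tiny stand-in. It is not a probabilistic graphical model and not anything Bamman or Jockers describes: it assumes a single numeric feature per text (say, the rate of some word per 1,000 words) that is simply an author offset plus a genre offset, and it estimates both by alternating averages (backfitting). The data, the labels, and the function name `fit_additive` are all hypothetical.

```python
from collections import defaultdict
from statistics import mean

# Invented observations: (author, genre, feature value). Built by hand so that
# "gothic" adds 1 and "historical" subtracts 1 from an author's baseline.
# Author A is over-represented in the gothic, which confounds raw genre means.
TEXTS = [
    ("A", "gothic", 7.0),
    ("A", "gothic", 7.0),
    ("A", "historical", 5.0),
    ("B", "historical", 2.0),
]

def fit_additive(texts, rounds=50):
    """Estimate author and genre offsets under value = author + genre."""
    author_fx = defaultdict(float)
    genre_fx = defaultdict(float)
    for _ in range(rounds):
        # Re-estimate each author offset from what the genre offsets leave unexplained.
        residuals = defaultdict(list)
        for author, genre, value in texts:
            residuals[author].append(value - genre_fx[genre])
        for author, vals in residuals.items():
            author_fx[author] = mean(vals)
        # Re-estimate each genre offset from what the author offsets leave unexplained.
        residuals = defaultdict(list)
        for author, genre, value in texts:
            residuals[genre].append(value - author_fx[author])
        for genre, vals in residuals.items():
            genre_fx[genre] = mean(vals)
    return dict(author_fx), dict(genre_fx)

author_fx, genre_fx = fit_additive(TEXTS)
print(genre_fx["gothic"] - genre_fx["historical"])
```

On this toy data the raw genre averages differ by 3.5 (7.0 against 3.5), mostly because author A dominates the gothic sample. Once authorship is modeled explicitly, the estimated genre gap settles near 2, the value built into the data. Only differences between offsets are meaningful here, since a constant can be shifted freely between the author and genre terms.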

We found something relevant to this question in Pamphlet 1 of the Literary Lab — if you look at its section 6 [Authors vs Genres], it shows that texts written by the same author, though belonging to different novelistic genres [historical novel, Bildungsroman, etc], clustered better by author than by genre. Whether that would also occur once one moves to more drastic discontinuities [lyric, narrative etc] remains to be seen.

It is kind of funny (and has been pointed out by John Burrows already) that in stark contrast to Foucault’s reflections on authorship, the author signal proves so overwhelmingly strong. But findings like this compel us to look closer at the relationship between literary concepts like ‘author’ and what we really measure. ‘Author’ implies coherence (and disruption of coherence) on very different levels like style, topics, traits, the unity of the author’s ‘work’, the legal entity, the public image etc. If we use, for example, Delta on a corpus and find a very neat clustering according to authors’ names, we are looking at the distance between distributions of (mostly function) words in relation to the average distribution of the corpus, something which cannot be mapped to ‘style’ without some brute force. At least it is not an aspect of style as we model style in literary studies, where we typically concentrate on foregrounded features (irony and long sentences for Thomas Mann etc.). If we now say we can operationalize literary concepts like this, we kind of obscure the fact that this doesn’t seem to be the case in DH fields like computational stylistics, where we experiment with measures and features until we find a successful constellation – and only then do we start to look for an explanation, look closer at our model of the phenomenon, and try to derive our feature from it. In the case of Delta we can offer one by changing our concept of style to a more abstract one comprising all kinds of selections by the author, including function words. So in the reality of much of my DH work, and of the work I am hearing and reading about, the process seems to be more entangled, changing the concepts we are trying to test in the very act of testing.
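For readers who have not met Delta, the following is a minimal sketch of the measure as it is usually described: take the most frequent words in the corpus, convert each text’s relative frequencies to z-scores against the corpus, and average the absolute differences between two texts. The three token lists are invented, the names `z_scores` and `delta` are hypothetical, and the use of the population standard deviation is an assumption (implementations differ on this detail).

```python
from collections import Counter
from statistics import mean, pstdev

def z_scores(corpus, n_words):
    """Z-score relative frequencies of the corpus's n most frequent words.

    corpus maps a text name to its list of tokens.
    """
    totals = Counter()
    for tokens in corpus.values():
        totals.update(tokens)
    vocab = [word for word, _ in totals.most_common(n_words)]

    freqs = {}
    for name, tokens in corpus.items():
        counts = Counter(tokens)
        freqs[name] = [counts[word] / len(tokens) for word in vocab]

    columns = list(zip(*freqs.values()))
    means = [mean(col) for col in columns]
    # A word used at the same rate in every text has zero spread;
    # fall back to 1.0 so the division below is always defined.
    spreads = [pstdev(col) or 1.0 for col in columns]
    return {
        name: [(f - m) / s for f, m, s in zip(row, means, spreads)]
        for name, row in freqs.items()
    }

def delta(z, a, b):
    """Mean absolute difference between the z-score profiles of texts a and b."""
    return mean([abs(x - y) for x, y in zip(z[a], z[b])])

# Invented toy corpus: two texts with similar function-word habits, one without.
CORPUS = {
    "text_a1": "the and the of the and".split(),
    "text_a2": "the of the and the of".split(),
    "text_b": "of of the of and of".split(),
}
z = z_scores(CORPUS, n_words=3)
print(delta(z, "text_a1", "text_a2") < delta(z, "text_a1", "text_b"))
```

Nothing in this computation refers to irony, sentence length, or any other foregrounded feature, which is the gap between the measure and the literary concept of style that the comment above points to.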

I think what you say about “style” is very true, Fotis. The techniques that work for authorship attribution are not necessarily the same thing as what we used to mean by “style.”

Once we’ve grasped this, there are perhaps three different paths we could take. We could simply declare that the algorithms we can get to work == “style.” But I agree with you, that’s unsatisfactory.

Another path is visible in pamphlet 5 at the Stanford Literary Lab. There they make a conscientious effort to identify a linguistic phenomenon that comes closer to lining up with our original intuitions about “style.” If that project succeeded, it would allow us to operationalize the concept in Franco’s sense.

My own view would be that our intuitions about “style” were never very coherent in the first place. We had a word that felt meaningful, because of strong associations with personality and aesthetic cultivation. But it was always used in contradictory ways: it was a name for the social concept linguists now call “register,” and for other variations of diction associated with genre, as well as for signs of individual authorship. I’m not sure this is a word I want to work hard to rescue. This is what I mean by saying that the attempt to operationalize a concept may cause it to “dissolve in our hands.” We may realize that the concepts we’re trying to model were never as coherent as we thought. But I would consider that a good thing, opening up the possibility of a more richly descriptive vocabulary.

You are certainly right in your scepticism about the vagueness of many uses of concepts in literary studies. But at the moment I wonder whether our measurements will really contribute to a better definition of them, or just dissolve them. Even if literary concepts are rather often ‘compound notions’ consisting of heterogeneous elements – as you pointed out convincingly with style (register, genre, individuality) – it is unclear whether and especially how what we measure is related to these elements. Literary concepts of style, for example, seem to have in common that they focus on those aspects of language use which are foregrounded, for example by deviating from a language norm. If we count something like most frequent words we focus on something different, but we could argue that these are dependent phenomena even if we don’t understand the details exactly (yet). In that case we would have a measurement related to a revised and refined concept of style. Otherwise we would have to replace the old concepts or build a new description language next to them. But as you said – it is an open question, and in the end we will have in both cases “a more richly descriptive vocabulary.”

Is the process of “operationalising a concept” the same as the “hermeneutic gap” described below?

Quoting from Beginning Theory by Peter Barry, chapter 11 on stylistics:

//A problem to focus on as you begin your involvement with this topic is the one highlighted by Stanley Fish in his essay ‘What is Stylistics and Why are they Saying such Terrible Things About It?’ Fish says that there is always a gap between the linguistic features identified in the text and the interpretation of them offered by the stylistician. We might call this problem the hermeneutic gap (‘hermeneutic’ means concerned with the act of interpretation). For instance, it may be said that an utterance uses a large number of passive verbs – those patterned ‘I have been informed that …’: this is the linguistic feature. These passives, we may then be told, indicate a degree of evasiveness in the text: this is the interpretation.

The difficulty is knowing how we can be sure that there is a link between the use of the passive and evasiveness. Can we be sure that the user of the passive is usually being evasive in some way, for instance, trying to conceal the identity of the informant, and, so to speak, to conceal that concealment? (Was I being evasive when I used passives in the previous paragraph?) If the passive is only sometimes evasive, what are the circumstances which make it so?//

At least to me they both sound very similar. I think it would be interesting to analyse this relation further: can we try to close this hermeneutic gap by using computational methods? Is operationalising the same as trying to bridge the hermeneutic gap?