While I’m fascinated by cases where the frequencies of two, or ten, or twenty words closely parallel each other, my conscience has also been haunted by a problem with trend-mining — which is that it always works. There are so many words in the English language that you’re guaranteed to find groups of them that correlate, just as you’re guaranteed to find constellations in the night sky. Statisticians call this the problem of “multiple comparisons”; it rests on a fallacy that’s nicely elucidated in this classic xkcd comic about jelly beans.

Simply put: it feels great to find two conceptually related words that correlate over time. But we don’t know whether this is a significant find, unless we also know how many potentially related words don’t correlate.
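To get a feel for how easily accidental correlations turn up, here is a minimal simulation. It is a sketch under invented assumptions: the "words" are pure Gaussian noise rather than real frequency data, and the function name, the number of words, and the seed are all hypothetical. The cutoff of 0.197 is approximately the two-tailed p < .05 threshold for r when n = 100.

```python
import random
from itertools import combinations


def pearson(xs, ys):
    """Plain Pearson correlation between two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / (sxx * syy) ** 0.5


def count_spurious_pairs(n_words=100, n_years=100, threshold=0.197, seed=0):
    """Count pairs of pure-noise 'words' whose |r| exceeds the threshold."""
    rng = random.Random(seed)
    # Each "word" is 100 years of random noise, unrelated to every other word.
    series = [[rng.gauss(0, 1) for _ in range(n_years)] for _ in range(n_words)]
    hits = total = 0
    for a, b in combinations(series, 2):
        total += 1
        if abs(pearson(a, b)) > threshold:
            hits += 1
    return hits, total


hits, total = count_spurious_pairs()
print(f"{hits} of {total} random pairs look 'significant'")
```

With 100 noise words there are 4,950 pairs, and something near 5% of them should clear the bar by chance alone. That is a couple of hundred "discoveries" with nothing behind them.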

One way to address this problem is to separate the process of forming hypotheses from the process of testing them. For instance, we could use topic modeling to divide the lexicon up into groups of terms that occur in the same contexts, and then predict that those terms will also correlate with each other over time. In making that prediction, we turn an undefined universe of possible comparisons into a finite set.

Once you create a set of topics, plotting their frequencies is simple enough. But plotting the aggregate frequency of a group of words isn’t the same thing as “discovering a trend,” unless the individual words in the group actually correlate with each other over time. And it’s not self-evident that they will.
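A toy example may make the distinction concrete. The three "words" and their yearly counts below are invented for illustration, and `mean_pairwise_r` is a hypothetical helper, not something from my actual pipeline. The aggregate curve rises nicely, but only because one high-frequency word dominates the sum.

```python
from itertools import combinations


def pearson(xs, ys):
    """Plain Pearson correlation between two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / (sxx * syy) ** 0.5


def mean_pairwise_r(series_by_word):
    """Average Pearson r over every pair of words in the group."""
    pairs = list(combinations(series_by_word.values(), 2))
    return sum(pearson(a, b) for a, b in pairs) / len(pairs)


# Hypothetical yearly frequencies for three words grouped into one "topic".
topic = {
    "rising": [10 + 5 * t for t in range(10)],
    "noisy": [3, 1, 4, 1, 5, 9, 2, 6, 5, 3],
    "falling": [9 - 0.5 * t for t in range(10)],
}

# Aggregate frequency of the group in each year.
aggregate = [sum(year) for year in zip(*topic.values())]

print("aggregate, first and last year:", aggregate[0], aggregate[-1])
print("mean pairwise r:", round(mean_pairwise_r(topic), 3))
```

Here the aggregate climbs from 22 to 62.5, yet the mean pairwise correlation is negative. A plot of the summed frequency would look like a trend even though the words are not moving together.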

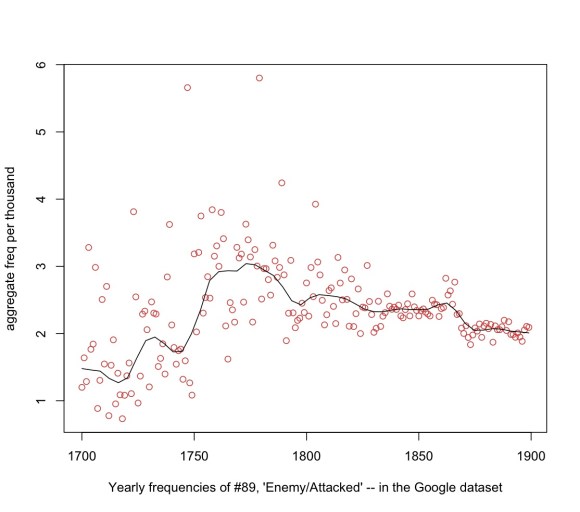

So I decided to test the hypothesis that they would. I used semi-fuzzy clustering to divide one 18c collection (TCP-ECCO) into 200 groups of words that tend to appear in the same volumes, and then tested the coherence of those topics over time in a different 18c collection (a much-cleaned-up version of the Google ngrams dataset I produced in collaboration with Loretta Auvil and Boris Capitanu at the NCSA). Testing hypotheses in a different dataset than the one that generated them is a way of ensuring that we aren’t simply rediscovering the same statistical accidents a second time.

To make a long story short, it turns out that topics have a statistically significant tendency to be trends (at least when you’re working with a century-sized domain). Pairs of words selected from the same topic correlated significantly with each other even after factoring out other sources of correlation*; the Fisher weighted mean r for all possible pairs was 0.223, which, measured over a century (n = 100), is significant at p < .05.
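For readers who want to see the arithmetic, here is a rough sketch of a Fisher-style average and a significance check. I am assuming the common convention of transforming each r to z with atanh, weighting by n - 3, and transforming back. The p-value uses the normal approximation to Fisher's z rather than an exact t-test. Both function names are hypothetical.

```python
import math


def fisher_weighted_mean_r(rs, ns):
    """Average correlations in Fisher z-space, weighting each by n - 3."""
    zs = [math.atanh(r) for r in rs]
    weights = [n - 3 for n in ns]
    mean_z = sum(w * z for w, z in zip(weights, zs)) / sum(weights)
    return math.tanh(mean_z)


def two_tailed_p(r, n):
    """Approximate two-tailed p-value for r, via the Fisher z transform."""
    z = math.atanh(r) * math.sqrt(n - 3)
    # erfc(|z| / sqrt(2)) is the two-tailed area under the standard normal.
    return math.erfc(abs(z) / math.sqrt(2))


# Averaging two correlations that were each measured over 100 years.
print(fisher_weighted_mean_r([0.2, 0.6], [100, 100]))

# The mean r reported above, treated as a single correlation with n = 100.
print(two_tailed_p(0.223, 100))
```

Under this approximation, r = 0.223 with n = 100 gives a p-value between .02 and .03, which is consistent with the p < .05 claim.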

In practice, the coherence of different topics varied widely. And of course, any time you test a bunch of hypotheses in a row you're going to get some false positives. So the better way to assess significance is to control for the "false discovery rate." When I did that (using the Benjamini-Hochberg method) I found that 77 out of the 200 topics cohered significantly as trends.
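The Benjamini-Hochberg procedure itself is short enough to sketch. This is a generic version of the standard step-up rule, not the code I ran. The p-values in the example are made up, and `q` is the false discovery rate you are willing to tolerate.

```python
def benjamini_hochberg(p_values, q=0.05):
    """Return a list of booleans: True where the hypothesis is rejected.

    Step-up rule: sort the p-values, find the largest rank k such that
    p_(k) <= (k / m) * q, and reject every hypothesis ranked k or lower.
    """
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    k_max = 0
    for rank, i in enumerate(order, start=1):
        if p_values[i] <= rank / m * q:
            k_max = rank
    rejected = [False] * m
    for rank, i in enumerate(order, start=1):
        if rank <= k_max:
            rejected[i] = True
    return rejected


# Made-up p-values for ten hypothetical topics.
example = [0.001, 0.008, 0.039, 0.041, 0.042, 0.06, 0.074, 0.205, 0.212, 0.216]
decisions = benjamini_hochberg(example)
print(sum(decisions), "of", len(example), "topics pass")
```

Five of these ten p-values fall below .05 on their own, but only the two smallest survive the correction. That is the kind of shrinkage to expect when you test many topics at once.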

There are a lot of technical details, but I'll defer them to a footnote at the end of this post. What I want to emphasize first is the practical significance of the result for two different kinds of researchers. If you're interested in mining diachronic trends, then it may be useful to know that topic-modeling is a reliable way of discovering trends that have real statistical significance and aren’t just xkcd’s “green jelly beans.”

Conversely, if you're interested in topic modeling, it may be useful to know that the topics you generate will often be bound together by correlation over time as well. (In fact, as I’ll suggest in a moment, topics are likely to cohere as trends beyond the temporal boundaries of your collection!)

Finally, I think this result may help explain a phenomenon that Ryan Heuser, Long Le-Khac, and I have all independently noticed: groups of words that correlate over time in a given collection also tend to be semantically related. I’ve shown above that topic modeling tends to produce diachronically coherent trends. I suspect that the converse proposition is also true: clusters of words linked by correlation over time will turn out to have a statistically significant tendency to appear in the same contexts.

Why are topics and trends so closely related? Well, of course, when you’re topic-modeling a century-long collection, co-occurrence has a diachronic dimension to start with. So the boundaries between topics may already be shaped by change over time. It would be interesting to factor time out of the topic-modeling process, in order to see whether rigorously synchronic topics would still generate diachronic trends.

I haven’t tested that yet, but I have tried another kind of test, to rule out the possibility that we’re simply rediscovering the same trends that generated the topics in the first place. Since the Google dataset is very large, you can also test whether 18c topics continue to cohere as trends in the nineteenth century. As it turns out, they do — and in fact, they cohere slightly more strongly! (In the 19c, 88 out of 200 18c topics cohered significantly as trends.) The improvement is probably a clue that Google’s dataset gets better in the nineteenth century (which god knows, it does) — but even if that’s true, the 19c result would be significant enough on its own to show that topic modeling has considerable predictive power.

Practically, it’s also important to remember that “trends” can play out on a whole range of different temporal scales.

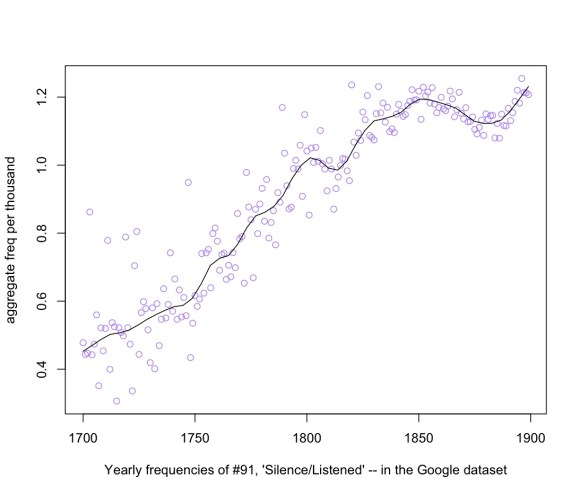

For instance, here’s the trend curve for topic #91, “Silence / Listened,” which is linked to the literature of suspense, and increases rather gradually and steadily from 1700 to the middle of the nineteenth century.

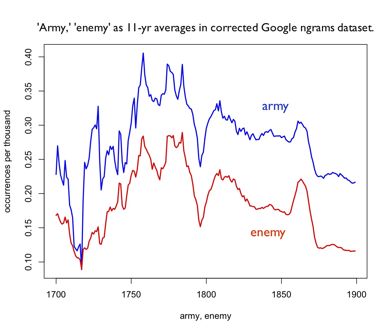

By contrast, here’s the trend curve for topic #89, “Enemy/Attacked,” which is largely used in describing warfare. It doesn’t change frequency markedly from beginning to end; instead it bounces around from decade to decade with a lot of wild outliers. But it is in practice a very tightly knit trend: a pair of words selected from this topic will have on average 31% of their variance in common (that is, an average r² of about 0.31). The peaks and outliers are not random noise: they’re echoes of specific armed conflicts.

* Technical details: Instead of using Latent Dirichlet Allocation for topic modeling, I used semi-fuzzy c-means clustering on term vectors, where term vectors are defined in the way I describe in this technical note. I know LDA is the standard technique, and it seems possible that it would perform even better than my clustering algorithm does. But in a sufficiently large collection of documents, I find that a clustering algorithm produces, in practice, very coherent topics, and it has some other advantages that appeal to me. The “semi-fuzzy” character of the algorithm allows terms to belong to more than one cluster, and I use cosine similarity to the centroid to define each term’s “degree of membership” in a topic.
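To illustrate just the membership step, here is a small sketch. It does not implement c-means clustering itself. The three-dimensional vectors, the `min_sim` cutoff, and the function names are all assumptions made for the example. The only part taken from my description above is the idea of using cosine similarity to a centroid as the degree of membership, with a term allowed to belong to more than one cluster.

```python
import math


def cosine(u, v):
    """Cosine similarity between two vectors of equal length."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def memberships(term_vector, centroids, min_sim=0.5):
    """Map cluster index -> degree of membership for one term.

    A term joins every cluster whose centroid it resembles closely enough,
    so it can belong to more than one. min_sim is a hypothetical cutoff.
    """
    result = {}
    for index, centroid in enumerate(centroids):
        sim = cosine(term_vector, centroid)
        if sim >= min_sim:
            result[index] = sim
    return result


# Toy centroids for three clusters and one term that sits between two of them.
centroids = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
term = [1.0, 1.0, 0.0]
print(memberships(term, centroids))
```

The toy term ends up in clusters 0 and 1 with equal membership (about 0.71 each) and stays out of cluster 2.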

I only topic-modeled the top 5000 words in the TCP-ECCO collection. So in measuring pairwise correlations of terms drawn from the same topic, I had to calculate each one as a partial correlation, controlling for the fact that terms drawn from the top 5k of the lexicon are all going to have, on average, a slight correlation with each other simply by virtue of being drawn from that larger group.
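For anyone unfamiliar with partial correlation, here is the textbook first-order formula as a sketch. The choice of control variable is an assumption for the sake of the example: I use a single background series `z` standing in for the shared movement of the larger group, and the numbers are invented. This shows the general technique, not the exact calculation I performed.

```python
def pearson(xs, ys):
    """Plain Pearson correlation between two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / (sxx * syy) ** 0.5


def partial_r(xs, ys, zs):
    """Correlation of xs and ys after removing what both share with zs."""
    r_xy = pearson(xs, ys)
    r_xz = pearson(xs, zs)
    r_yz = pearson(ys, zs)
    return (r_xy - r_xz * r_yz) / ((1 - r_xz ** 2) * (1 - r_yz ** 2)) ** 0.5


# Hypothetical data: both words follow the background series z,
# plus their own unrelated wobble.
z = [1, 2, 3, 4, 5, 6, 7, 8]
word_a = [2, 1, 4, 3, 6, 5, 8, 7]
word_b = [2, 3, 2, 3, 6, 7, 6, 7]

print("raw r:", round(pearson(word_a, word_b), 2))
print("partial r:", round(partial_r(word_a, word_b, z), 2))
```

The raw correlation between the two toy words is high (close to 0.8), but nearly all of it comes from the background series. Once that is controlled for, the partial correlation is close to zero.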