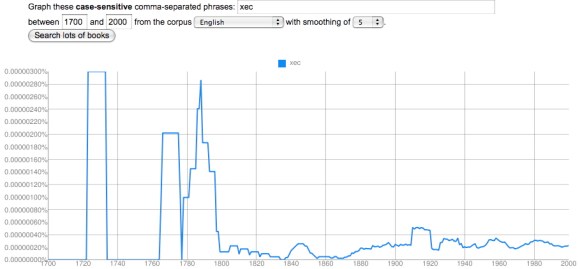

I’ve asserted several times that flaws in optical character recognition (OCR) are not a crippling problem for the English part of the Google dataset, after 1820. Readers may wonder where I get that confidence, since it’s easy to generate a graph like this for almost any short, random string of letters:

It’s true that the OCR process is imperfect, especially with older typography, and produces some garbage strings of letters. You see a lot of these if you browse Google Books in earlier periods. The researchers who created the ngram viewer did filter out the volumes with the worst OCR. So the quality of OCR here is higher than you’ll see in Google Books at large — but not perfect.

I meant “xec” to be a nonsense string, but there are surprisingly few strings of complete nonsense. It turns out that “xec” occurs for all kinds of legitimate reasons: it appears in math, as a model number, and as a middle name in India. But the occurrences before 1850 that look like the Chicago skyline are mostly OCR noise. Now, the largest of these is three millionths of a percent (10⁻⁶). By contrast, a moderately uncommon word like “apprehend” ranges from a frequency of two thousandths of a percent (10⁻³) in 1700 to about two ten-thousandths of a percent (10⁻⁴) today. So we’re looking at a spike that’s about 1% of the minimum frequency of a moderately uncommon word.
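
As a rough check on that ratio, here is the arithmetic written out. The two constants are the approximate values quoted in the paragraph above, in percent; the variable names are just illustrative. The result is about 1.5%, the same order of magnitude as the “about 1%” estimate.

```python
# Approximate frequencies quoted in the text, expressed in percent.
XEC_SPIKE_PCT = 3e-6        # largest pre-1850 "xec" spike
APPREHEND_MIN_PCT = 2e-4    # "apprehend" at its lowest (today)

# How big is the OCR spike relative to a moderately uncommon word?
ratio = XEC_SPIKE_PCT / APPREHEND_MIN_PCT
print(f"spike / minimum frequency = {ratio:.3f} ({ratio:.1%})")  # 0.015 (1.5%)
```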

In the aggregate, OCR failures like this are going to reduce the frequency of all words in the corpus significantly. So one shouldn’t use the Google dataset to make strong claims about the absolute frequency of any word. But “xec” occurs randomly enough that it’s not going to pose a real problem for relative comparisons between words and periods. Here’s a somewhat more worrying problem:
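
A toy model makes the distinction between absolute and relative frequency concrete. This sketch assumes, hypothetically, that OCR garbles the same fraction of every word’s tokens and that the garbled tokens still count toward the corpus total. Under that assumption every word’s share drops by the same factor, so the ratio between two words is untouched. Comparisons between periods hold up only to the extent that the garbled fraction stays similar from one period to the next, which is the worry that unevenly distributed errors raise.

```python
from collections import Counter

def relative_frequencies(counts: Counter) -> dict[str, float]:
    """Share of all tokens (garbage strings included) for each string."""
    total = sum(counts.values())
    return {w: c / total for w, c in counts.items()}

def apply_uniform_ocr_loss(counts: Counter, loss_rate: float) -> Counter:
    """Hypothetical model: OCR garbles the same fraction of every word's
    tokens, and the garbled tokens land in one junk bucket that still
    counts toward the corpus total."""
    damaged = Counter()
    junk = 0.0
    for word, c in counts.items():
        kept = c * (1 - loss_rate)
        damaged[word] = kept
        junk += c - kept
    damaged["<ocr-junk>"] = junk
    return damaged

# Invented counts, for illustration only.
clean = Counter({"him": 2500, "her": 1500, "apprehend": 2})
noisy = apply_uniform_ocr_loss(clean, loss_rate=0.05)

before = relative_frequencies(clean)
after = relative_frequencies(noisy)

print(after["him"] / before["him"])  # absolute share falls by about 5%
print(after["him"] / after["her"], before["him"] / before["her"])  # ratio unchanged
```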

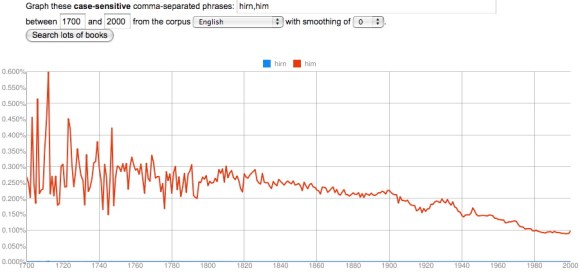

English unfortunately has a lot of letters that look like little bumps, so “hirn” is a very common OCR error for “him.” Two problems leap out here. First, the scale of the error is larger. At its peak, it’s four ten-thousandths of a percent (10⁻⁴), which is comparable to the frequency of an uncommon word. Second, and more importantly, the error is distributed very unequally; it increases as one goes back in time (because print quality is poorer), which could skew a diachronic graph by reducing the frequency of “him” in the early 18c. But as you can see, this doesn’t happen to any significant degree:

“Hirn” is a very common error because “him” is a very common word, averaging around a quarter of a percent in 1750. The error in this case is on the order of one thousandth the size of the word itself, which is why “hirn” totally disappears on this graph. So even if we postulate that there are twenty equally common ways of getting “him” wrong in the OCR (which I doubt), this is not going to be a crippling problem. It’s a much less significant obstacle than the random year-to-year sampling variability caused by the small size of the early-eighteenth-century dataset, which becomes visible here because I’ve set the smoothing to “0” instead of my usual setting of “5.”
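
The back-of-the-envelope bound can be made explicit. This sketch is deliberately pessimistic: it divides the *peak* “hirn” frequency by the *average* “him” frequency, then multiplies by the twenty hypothetical misreadings. The figures are the approximate ones quoted above. One variant comes to about 0.16% of “him,” and twenty variants to about 3.2%.

```python
HIM_PCT = 0.25        # "him" averages about a quarter of a percent (1750)
HIRN_PEAK_PCT = 4e-4  # peak frequency of the "hirn" misreading
N_VARIANTS = 20       # hypothetical, deliberately pessimistic count of misreadings

per_variant = HIRN_PEAK_PCT / HIM_PCT   # share of "him" lost to one misreading
worst_case = N_VARIANTS * per_variant   # share lost if all twenty were this common
print(f"one variant: {per_variant:.2%}; twenty variants: {worst_case:.1%}")
```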

The takeaway here is that one needs to be cautious before 1820 for a number of reasons. Bad OCR is the most visible of those reasons, and the one most likely to scandalize people, but (except for the predictable f/s substitution before 1820) it’s actually not as significant a problem as the small size of the dataset itself. That’s why I think the relatively large size of the Google dataset outweighs its imperfections.

By the way, the mean frequency of all words in the lexicon does decline over time as the lexicon grows, but that subtle shift is probably not the primary explanation for the downward slope of “him.” “Her” increases in frequency from 1700 to the present; “the” remains largely stable. The expansion of the lexicon and the proliferation of nonfiction genres do, however, give us a good reason not to over-read slight declines in frequency. A word doesn’t have to be displaced by anything in particular; it can be displaced by everything in the aggregate.
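
A toy example (invented data, not drawn from the Google corpus) shows how a word can be displaced by everything in the aggregate. Because relative frequencies always sum to one, their mean across the vocabulary is simply one divided by the number of distinct words, so it must fall as the lexicon grows. In the sketch, “him” keeps exactly the same count, yet its share drops once new vocabulary enters. This illustrates the general mechanism only; it is not offered as the explanation for the “him” curve.

```python
from collections import Counter

def share(tokens: list[str], word: str) -> float:
    """Relative frequency of `word` among all tokens."""
    return Counter(tokens)[word] / len(tokens)

def mean_relative_frequency(tokens: list[str]) -> float:
    """Mean relative frequency over distinct words (always 1 / number of types)."""
    counts = Counter(tokens)
    return sum(c / len(tokens) for c in counts.values()) / len(counts)

# Invented toy corpora: the later one keeps every earlier token unchanged
# and adds ten new words, each used once.
early = ["the"] * 60 + ["him"] * 25 + ["ship"] * 15
later = early + [f"newword{i}" for i in range(10)]

print(share(early, "him"), share(later, "him"))  # 0.25, then about 0.227
print(mean_relative_frequency(early), mean_relative_frequency(later))  # about 0.333, then about 0.077
```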

An even better reason not to over-read changes of 5–10% is that, frankly, no one is going to care about them. The connection between word frequency and discourse content is still very fuzzy; we’re not in a position to assume that all changes are significant. If the ngram viewer were mostly revealing this sort of subtle variation, I might be one of the people who dismiss it as trivial bean-counting. In fact, it’s revealing shifts on a much larger scale, shifts that amount to qualitative change: the space allotted to words for color seems to have grown more than threefold between 1700 and 1940, and possibly more than tenfold in fiction.

This is the fundamental reason why I’m not scandalized by OCR errors. We’re looking at a domain where the minimum threshold for significance is very high from the start, because humanists basically aren’t yet convinced that changes in frequency matter at all. It’s unlikely that we’re going to spend much time arguing about phenomena subtle enough for OCR errors to make a difference.

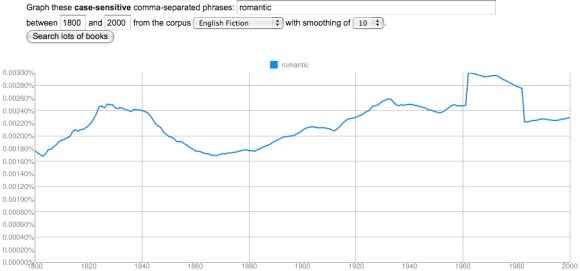

This isn’t to deny that one has to be cautious. There are real pitfalls in this tool. In the 18c, its case sensitivity and its tendency to substitute f for s become huge problems. It also doesn’t know anything about spelling variants (antient/ancient, changed/chang’d) or morphology (run/ran). And once in a great while you run into something like this:
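
For the case and spelling problems, one workaround is to fold variant forms into a single headword before summing their counts, for instance after downloading the raw ngram counts. The sketch below is hypothetical: the `VARIANTS` table is a tiny invented example, and the function names are illustrative. It deliberately leaves the f/s problem alone, since a form like “fame” may be a correct word or a misread “same,” and only context can tell.

```python
from collections import Counter

# Hypothetical headword table (invented for illustration). A real study
# would need a much larger, hand-checked list.
VARIANTS = {
    "antient": "ancient",   # spelling variant
    "chang'd": "changed",   # spelling variant
    "ran": "run",           # crude morphological (lemma) mapping
}

def normalize(token: str) -> str:
    """Case-fold a token and map known variants to a single headword.

    The long-s/f confusion is deliberately not handled: "fame" may be a
    correct word or a misread "same", so that needs context to resolve.
    """
    lowered = token.lower()
    return VARIANTS.get(lowered, lowered)

def combined_counts(raw: dict[str, int]) -> Counter:
    """Sum raw ngram counts after normalizing each form."""
    totals = Counter()
    for token, count in raw.items():
        totals[normalize(token)] += count
    return totals

# Invented counts for four forms of one word in a single year.
raw = {"Ancient": 12, "ancient": 30, "antient": 41, "Antient": 7}
print(combined_counts(raw))  # Counter({'ancient': 90})
```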

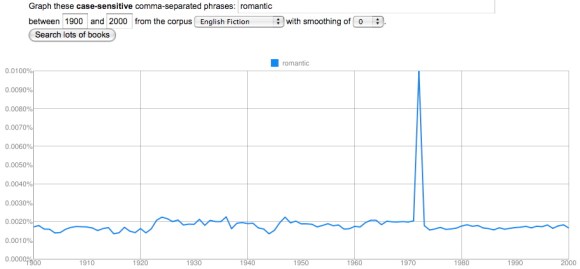

“Hmm,” I thought. “That’s odd. One doesn’t normally see straight-sided plateaus outside the 18c, where the sample size is small enough to generate spikes. Let’s have a bit of a closer look and turn off smoothing.”
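
Turning smoothing off is a sensible first check, because smoothing alone can manufacture a plateau. As I understand the viewer’s documentation, a smoothing of n averages each year with the n years on either side. Assuming that centered moving average, a single-year spike becomes a flat-topped block 2n + 1 years wide, with straight sides. The series below is invented, and the edge handling (averaging whatever neighbours exist) is an assumption, not necessarily what the viewer does.

```python
def smooth(series: list[float], n: int) -> list[float]:
    """Centered moving average: each point is averaged with up to n
    neighbours on each side. Edge handling (use whatever neighbours
    exist) is an assumption for this sketch."""
    out = []
    for i in range(len(series)):
        window = series[max(0, i - n): i + n + 1]
        out.append(sum(window) / len(window))
    return out

years = list(range(1965, 1980))
# Invented series: flat baseline with a single-year spike in 1972.
raw = [1.0] * len(years)
raw[years.index(1972)] = 8.0

smoothed = smooth(raw, 3)
for y, r, s in zip(years, raw, smoothed):
    print(y, r, round(s, 2))  # 1969 through 1975 all come out at 2.0
```

With my usual setting of 5, the same spike would be spread across an eleven-year block.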

Yep, that’s odd. My initial thought was the overwhelming power of the movie Love Story, but that came out in 1970, not 1972.

I’m actually not certain what kind of error this is — if it’s an error at all. (Some crazy-looking early 18c spikes in the names of colors turn out to be Isaac Newton’s Opticks.) But this only appears in the fiction corpus and in the general English corpus; it disappears in American English and British English (which were constructed separately and are not simply subsets of English). Perhaps a short-lived series of romance novels with “romantic” in the running header at the top of every page? But I’ve browsed Google Books for 1972 and haven’t found the culprit yet. Maybe this is an ill-advised Easter egg left by someone who got engaged then.
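
Checking corpora against each other can be partly mechanized once the yearly frequencies for a word have been exported from two corpora. This is a hypothetical helper, not a feature of the ngram viewer: it flags years where the ratio between the two corpora strays more than a chosen factor from its typical (median) value. The numbers in the example are made up to mimic a one-year anomaly.

```python
from statistics import median

def flag_divergent_years(a: dict[int, float], b: dict[int, float],
                         factor: float = 2.0) -> list[int]:
    """Return years where the ratio a/b strays more than `factor` from its median.

    `a` and `b` map year -> frequency of the same word in two corpora.
    Years missing from either corpus, or with zero frequency in `b`, are skipped.
    """
    shared = sorted(set(a) & set(b))
    ratios = {y: a[y] / b[y] for y in shared if b[y] > 0}
    typical = median(ratios.values())
    return [y for y, r in ratios.items()
            if r > typical * factor or r < typical / factor]

# Made-up frequencies (arbitrary units) for one word in two corpora.
english = {1969: 1.0, 1970: 1.1, 1971: 1.0, 1972: 3.2, 1973: 1.0, 1974: 0.9}
american = {1969: 1.0, 1970: 1.0, 1971: 1.0, 1972: 1.0, 1973: 1.0, 1974: 1.0}

print(flag_divergent_years(english, american))  # [1972]
```

Using the median rather than the mean keeps a single wild year from dragging the baseline toward itself.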

Now, I have to say that I’ve looked at hundreds and hundreds of ngrams, and this is the only case where I’ve stumbled on something flatly inexplicable. Clearly you have to have your wits about you when you’re working with this dataset; it’s still a construction site. It helps to write “case-sensitive” on the back of your hand, to keep smoothing set relatively low, to check different corpora against each other, to browse examples — and it’s wise to cross-check the whole Google dataset against another archive where possible. But this is the sort of routine skepticism we should always be applying to scholarly hypotheses, whether they’re based on three texts or on three million.