The stories I’ve read so far have largely missed the point of the program. They focus instead on the amusing notion that the government “fancies a huge metaphor repository.” And it’s true that the program description reads a bit like a section of English 101 taught by the men from Dragnet. “The Metaphor Program will exploit the fact that metaphors are pervasive in everyday talk and reveal the underlying beliefs and worldviews of members of a culture.” What is “culture,” you ask? Simply refer to section 1.A.3., “Program Definitions”: “Culture is a set of values, attitudes, knowledge and patterned behaviors shared by a group.”

This seems accurate enough, although the combination of precision and generality does feel a little freaky. “Affect is important because it influences behavior; metaphors have been associated with affect.”

The program announcement is similarly precise about the difference between metaphor and metonymy. (They’re not wild about metonymy.)

> (3) Figurative Language: The only types of figurative language that are included in the program are metaphors and metonymy.
>
> • Metonymy may be proposed in addition to but not instead of metaphor analysis. Those interested in metonymy must explain why metonymy is required, what metonymy adds to the analysis and how it complements the proposed work on metaphors.

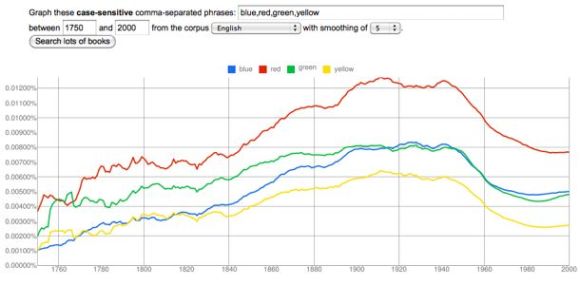

All this is fun, but the program also has a purpose that hasn’t been highlighted by most of the reporting I’ve seen. The second phase of the program will use statistical analysis of metaphors to “characterize differing cultural perspectives associated with case studies of the types of interest to the Intelligence Community.” One can only speculate about those types, but I imagine that we’re talking about specific political movements and religious groups. The goal is ostensibly to understand their “cultural perspectives,” but it seems quite possible that an unspoken, longer-term goal might involve profiling and automatically identifying members of demographic, vocational, or political groups. (IARPA has inherited some personnel and structures once associated with John Poindexter’s Total Information Awareness program.) The initial phase of the metaphor-mining is going to focus on four languages: “American English, Iranian Farsi, Russian Russian and Mexican Spanish.”

Naturally, my feelings are complex. Automatically extracting metaphors from text would be a neat trick, especially if you also distinguished metaphor from metonymy. (You would have to know, for instance, that “Oval Office” is a metonym, not a metaphor, for the executive branch of the US government.) [UPDATE: Arno Bosse points out that Brad Pasanek has in fact been working on techniques for automatic metaphor extraction, and has developed a very extensive archive. Needless to say, I don’t mean to associate Brad with the IARPA project.]

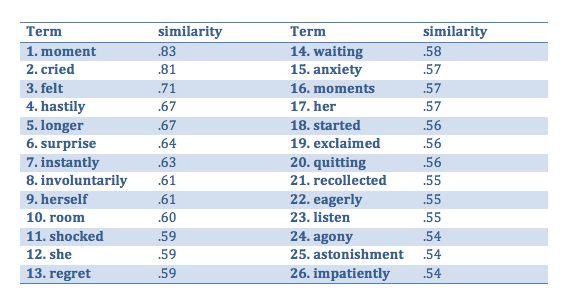

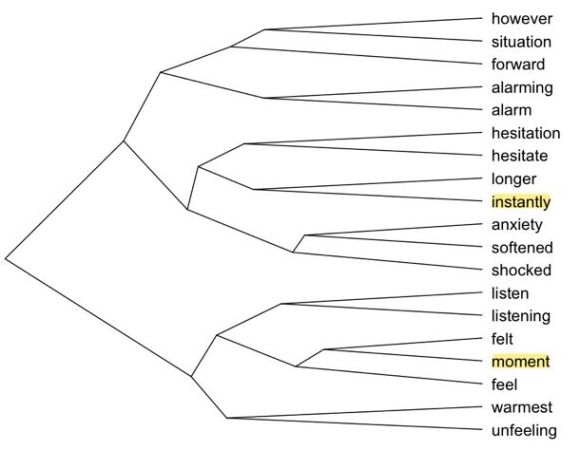

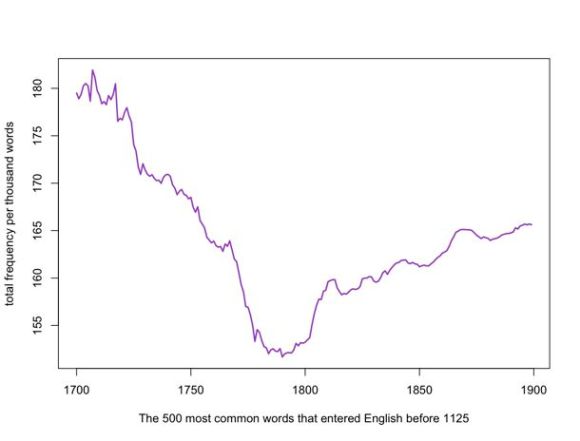

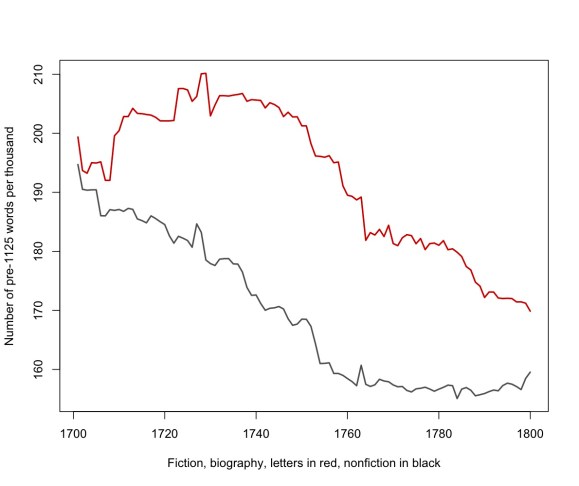

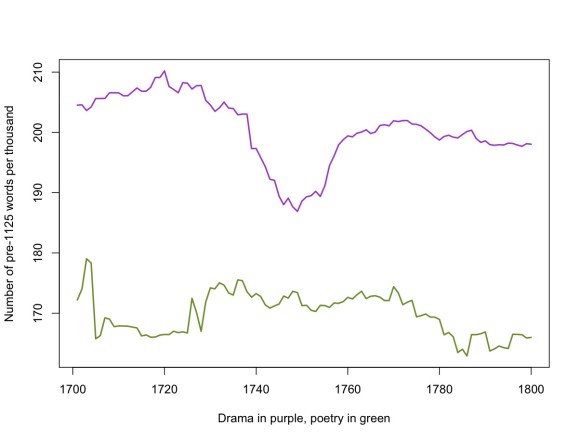

Going from a list of metaphors to useful observations about a “cultural perspective” would be an even neater trick, and I doubt that it can be automated. My doubts on that score are the main source of my suspicion that the actual deliverable of the grant will turn out to be profiling. That may not be the intended goal. But I suspect it will be the deliverable because I suspect that it’s the part of the project researchers will get to work reliably. It probably is possible to identify members of specific groups through statistical analysis of the metaphors they use.

On the other hand, I don’t find this especially terrifying, because it has a Rube Goldberg indirection to it. If IARPA wants to automatically profile people based on digital analysis of their prose, they can do that in simpler ways. The success of stylometry indicates that you don’t need to understand the textual features that distinguish individuals (or groups) in order to make fairly reliable predictions about authorship. It may well turn out that people in a particular political movement overuse certain prepositions, for reasons that remain opaque, although the features are reliably predictive. I am confident, of course, that intelligence agencies would never apply a technique like this domestically.
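To make the stylometric point concrete, here is a minimal sketch of prediction from features nobody needs to understand. Everything in it is an assumption for illustration: the list of prepositions, the group labels, and the toy sentences are invented, and nearest-centroid matching is just the simplest classifier I could use here, not a claim about what working stylometrists (or IARPA) actually do.

```python
from collections import Counter
import math
import re

# Hypothetical feature set: a handful of common English prepositions.
PREPOSITIONS = ["of", "in", "to", "for", "with", "on", "at", "by", "from", "about"]

def profile(text):
    """Return the relative frequency of each preposition in the text."""
    words = re.findall(r"[a-z']+", text.lower())
    counts = Counter(words)
    total = len(words) or 1  # avoid dividing by zero on empty input
    return [counts[p] / total for p in PREPOSITIONS]

def centroid(profiles):
    """Average a list of profiles, feature by feature."""
    n = len(profiles)
    return [sum(column) / n for column in zip(*profiles)]

def distance(a, b):
    """Euclidean distance between two profiles."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def classify(text, centroids):
    """Return the label whose centroid is closest to the text's profile."""
    p = profile(text)
    return min(centroids, key=lambda label: distance(p, centroids[label]))

# Invented toy training texts; the labels are placeholders, not real groups.
training = {
    "group_a": [
        "We spoke of the end of the road and of the price of bread.",
        "Most of the news of the day was of little use.",
    ],
    "group_b": [
        "They marched to the square to listen to speeches and to sing.",
        "She went to the market to talk to the vendors.",
    ],
}

centroids = {
    label: centroid([profile(t) for t in texts])
    for label, texts in training.items()
}

print(classify("The cost of the repair of the bridge was of concern.", centroids))
```

The classifier never asks why one group leans on “of” and the other on “to.” It only measures that they do, which is the sense in which the features can stay opaque while remaining predictive.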

Postscript: I should credit Anna Kornbluh for bringing this program to my attention.